The newly launched Apache Spark troubleshooting agent can get rid of hours of handbook investigation for information engineers and scientists working with Amazon EMR or AWS Glue. As a substitute of navigating a number of consoles, sifting by intensive log information, and manually analyzing efficiency metrics, now you can diagnose Spark failures utilizing easy pure language prompts. The agent mechanically analyzes your workloads and delivers actionable suggestions. remodeling a time-consuming troubleshooting course of right into a streamlined, environment friendly expertise.

On this publish, we present you the way the Apache Spark troubleshooting agent helps analyze Apache Spark points by offering detailed root causes and actionable suggestions. You’ll learn to streamline your troubleshooting workflow by integrating this agent together with your present monitoring options throughout Amazon EMR and AWS Glue.

Apache Spark powers vital ETL pipelines, real-time analytics, and machine studying workloads throughout hundreds of organizations. Nevertheless, constructing and sustaining Spark functions stays an iterative course of the place builders spend vital time troubleshooting. Spark utility builders encounter operational challenges due to some completely different causes:

- Complicated connectivity and configuration choices to quite a lot of assets with Spark – Though this makes Spark a preferred information processing platform, it usually makes it difficult to seek out the foundation explanation for inefficiencies or failures when Spark configurations aren’t optimally or appropriately configured.

- Spark’s in-memory processing mannequin and distributed partitioning of datasets throughout its employees – Though good for parallelism, this usually makes it tough for customers to establish inefficiencies. This ends in gradual utility execution or root explanation for failures attributable to useful resource exhaustion points corresponding to out of reminiscence and disk exceptions.

- Lazy analysis of Spark transformations – Though lazy analysis optimizes efficiency, it makes it difficult to precisely and rapidly establish the applying code and logic that triggered the failure from the distributed logs and metrics emitted from completely different executors.

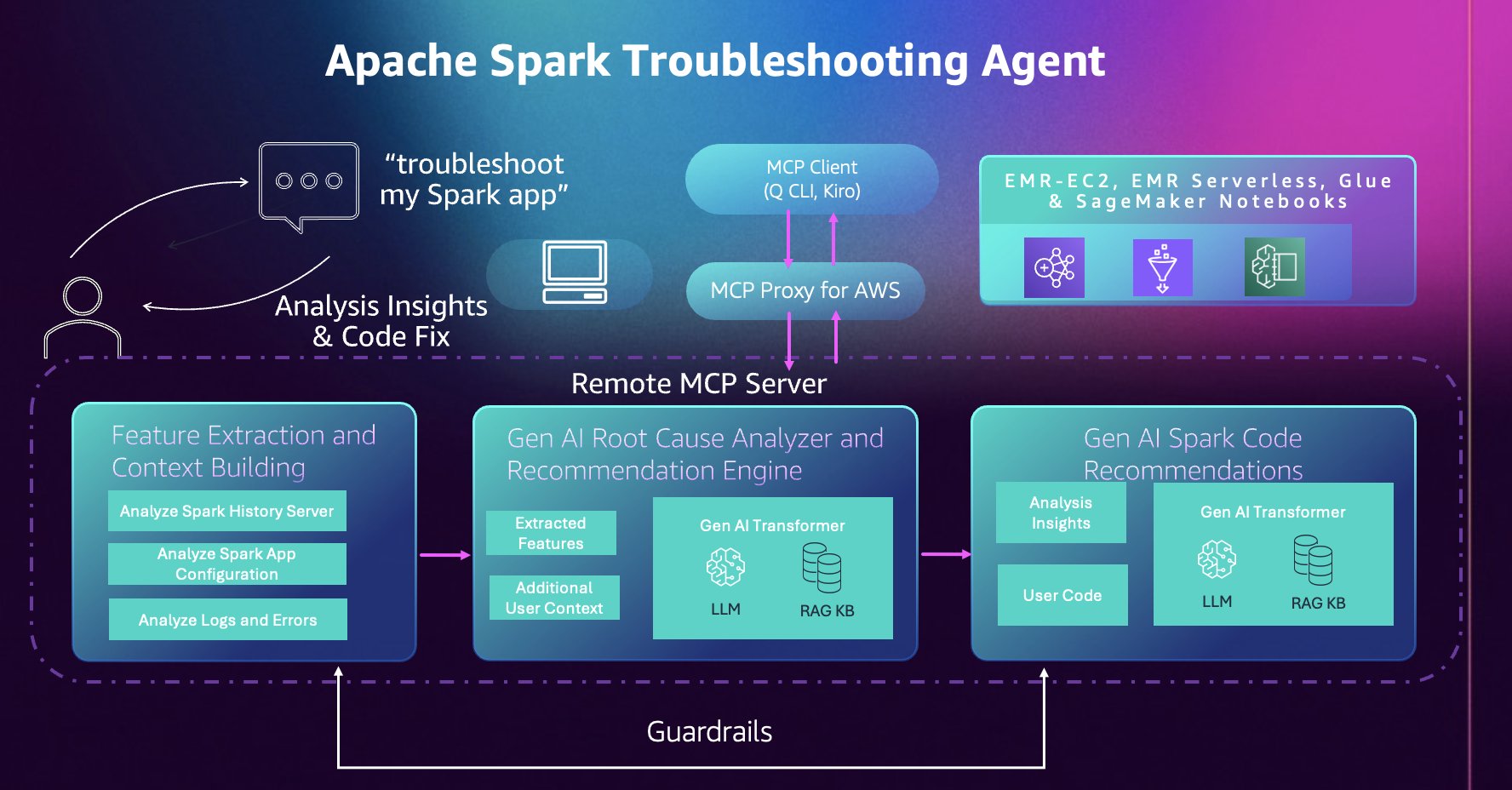

Apache Spark troubleshooting agent structure

This part describes the parts of the troubleshooting agent and the way they connect with your growth atmosphere. The troubleshooting agent supplies a single conversational entry level on your Spark functions throughout Amazon EMR, AWS Glue, and Amazon SageMaker Notebooks. As a substitute of navigating completely different consoles, APIs, and log places for every service, you work together with one Mannequin Context Protocol (MCP) server by pure language utilizing any MCP-compatible AI assistant of your alternative, together with customized brokers you develop utilizing frameworks corresponding to Strands Brokers.

Working as a completely managed cloud-hosted MCP server, the agent removes the necessity to preserve native servers whereas preserving your information and code remoted and safe in a single-tenant system design. Operations are read-only and backed by AWS Id and Entry Administration (IAM) permissions; the agent solely has entry to assets and actions your IAM function grants. Moreover, instrument calls are mechanically logged to AWS CloudTrail, offering full auditability and compliance visibility. This mixture of managed infrastructure, granular IAM controls, and CloudTrail integration confirms your Spark diagnostic workflows stay safe, compliant, and totally auditable.

The agent builds on years of AWS experience working hundreds of thousands of Spark functions at scale. It mechanically analyzes Spark Historical past Server information, distributed executor logs, configuration patterns, and error stack traces and extracts related options and alerts to floor insights that will in any other case require handbook correlation throughout a number of information sources and deep understanding of Spark and repair internals.

Getting began

Full the next steps to get began with the Apache Spark troubleshooting agent.

Conditions

Confirm you meet or have accomplished the next conditions.

System necessities:

- Python 3.10 or greater

- Set up the uv bundle supervisor. For directions, see putting in uv.

- AWS Command Line Interface (AWS CLI) (model 2.30.0 or later) put in and configured with applicable credentials.

IAM permissions: Your AWS IAM profile wants permissions to invoke the MCP server and entry your Spark workload assets. The AWS CloudFormation template within the setup documentation creates an IAM function with the required permissions. You can even manually add the required IAM permissions.

Arrange utilizing AWS CloudFormation

First, deploy the AWS CloudFormation template offered within the setup documentation. This template mechanically creates the IAM roles with the permissions required to invoke the MCP server.

- Deploy the template throughout the similar AWS Area you run your workloads in. For this publish, we’ll use us-east-1.

- From the AWS CloudFormation Outputs tab, copy and execute the atmosphere variable command:

- Configure your AWS CLI profile:

Arrange utilizing Kiro CLI

You need to use Kiro CLI to work together with the Apache Spark troubleshooting agent immediately out of your terminal.

Set up and configuration:

- Set up Kiro CLI.

- Add each MCP servers, utilizing the atmosphere variables from the earlier Arrange utilizing AWS CloudFormation part:

- Confirm your setup by working the

/instrumentscommand in Kiro CLI to see the obtainable Apache Spark troubleshooting instruments.

Arrange utilizing Kiro IDE

Kiro IDE supplies a visible growth atmosphere with built-in AI help for interacting with the Apache Spark troubleshooting agent.

Set up and configuration:

- Set up Kiro IDE.

- MCP configuration is shared throughout Kiro CLI and Kiro IDE. Open the command palette utilizing

Ctrl + Shift + P(Home windows / Linux) orCmd + Shift + P(macOS) and Seek forKiro: Open MCP Config - Confirm the contents of your

mcp.jsonmatch the Arrange utilizing Kiro CLI part.

Utilizing the troubleshooting agent

Subsequent, we offer 3 reference architectures for options to make use of the troubleshooting agent in your present workflows with ease. We additionally present the reference code and AWS CloudFormation templates for these architectures within the Amazon EMR Utilities GitHub repository.

Answer 1 – Conversational troubleshooting: Troubleshooting failed Apache Spark functions with Kiro CLI

When Spark functions fail throughout your information platform, your debugging strategy would sometimes contain navigating completely different consoles for Amazon EMR, Amazon EC2, Amazon EMR Serverless, and AWS Glue, manually reviewing Spark Historical past Server logs, checking error stack traces, analyzing useful resource utilization patterns, then correlating this data to seek out the foundation trigger and repair. The Apache Spark troubleshooting agent automates this complete workflow by pure language, offering a unified troubleshooting expertise throughout the three platforms. Merely describe your failed functions, for instance:

The agent mechanically extracts Spark occasion logs and metrics, analyzes the error patterns, and supplies a transparent root trigger clarification together with suggestions, all by the identical conversational interface. The next video demonstrates the whole troubleshooting workflow throughout Amazon EMR-EC2, Amazon EMR Serverless, and AWS Glue utilizing Kiro CLI:

Answer 2 – Agent-driven notifications: Combine the Apache Spark troubleshooting agent right into a monitoring workflow

Along with troubleshooting from the command line, the troubleshooting agent can plug into your monitoring infrastructure to supply improved failure notifications.

Manufacturing information pipelines require instant visibility when failures happen. Conventional monitoring programs can warn you when a Spark job fails, however diagnosing the foundation trigger nonetheless requires handbook investigation and an evaluation of what went incorrect earlier than remediation can start.

With the Apache Spark troubleshooting agent, you may combine it into your present monitoring workflows to obtain root causes and suggestions as quickly as you obtain a failure notification. Right here, we reveal two integration patterns that lead to automated root trigger evaluation inside your present workflows.

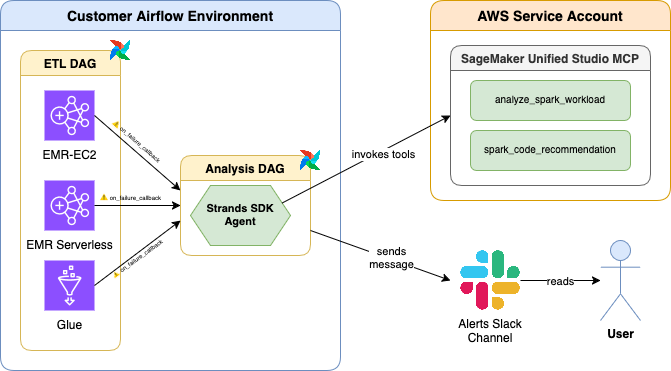

Apache Airflow Integration

This primary integration sample makes use of Apache Airflow callbacks to mechanically set off troubleshooting when Spark job operators fail.

When any Amazon EMR, Amazon EC2, Amazon EMR Serverless, or AWS Glue job operator fails in an Apache Airflow DAG,

- A callback invokes the Spark troubleshooting agent inside a separate DAG.

- The Spark troubleshooting agent analyzes the difficulty, establishes the foundation trigger, and identifies code repair suggestions.

- The Spark troubleshooting agent sends a complete diagnostic report back to a configured Slack channel.

The answer is offered within the Amazon EMR Utilities GitHub repository (documentation) for instant integration into your present Apache Airflow deployments with a 1-line change to your Airflow DAGs. The next video demonstrates this integration:

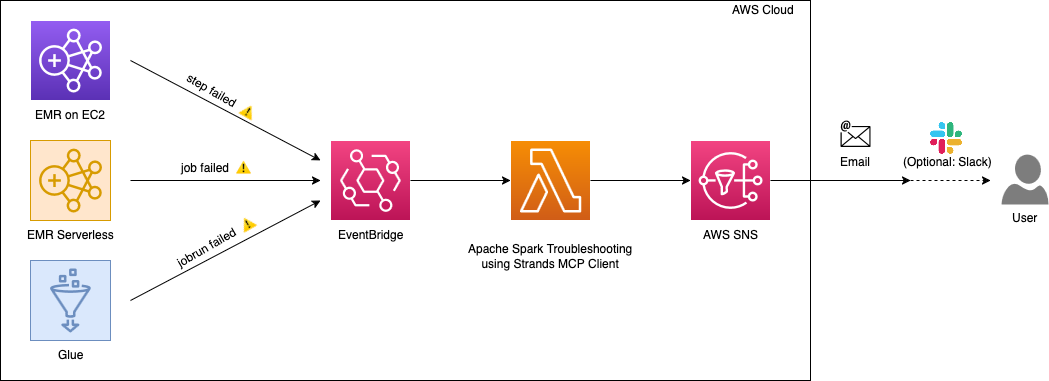

Amazon EventBridge integration

For event-driven architectures, this second sample makes use of Amazon EventBridge to mechanically invoke the troubleshooting agent when Spark jobs fail throughout your AWS atmosphere.

This integration makes use of an AWS Lambda operate that interacts with the Apache Spark troubleshooting agent by the Strands MCP Consumer.

When Amazon EventBridge detects failures from Amazon EMR-EC2 steps, Amazon EMR Serverless job runs, or AWS Glue job runs, it triggers the AWS Lambda operate which:

- Makes use of the Apache Spark troubleshooting agent to research the failure

- Identifies the foundation trigger and generates code repair suggestions

- Constructs a complete evaluation abstract

- Sends the abstract to Amazon SNS

- Delivers the evaluation to your configured locations (e-mail, Slack, or different SNS subscribers)

This serverless strategy supplies centralized failure evaluation throughout all of your Spark platforms with out requiring adjustments to particular person pipelines. The next video demonstrates this integration:

A reference implementation of this answer is offered within the Amazon EMR Utilities GitHub repository (documentation).

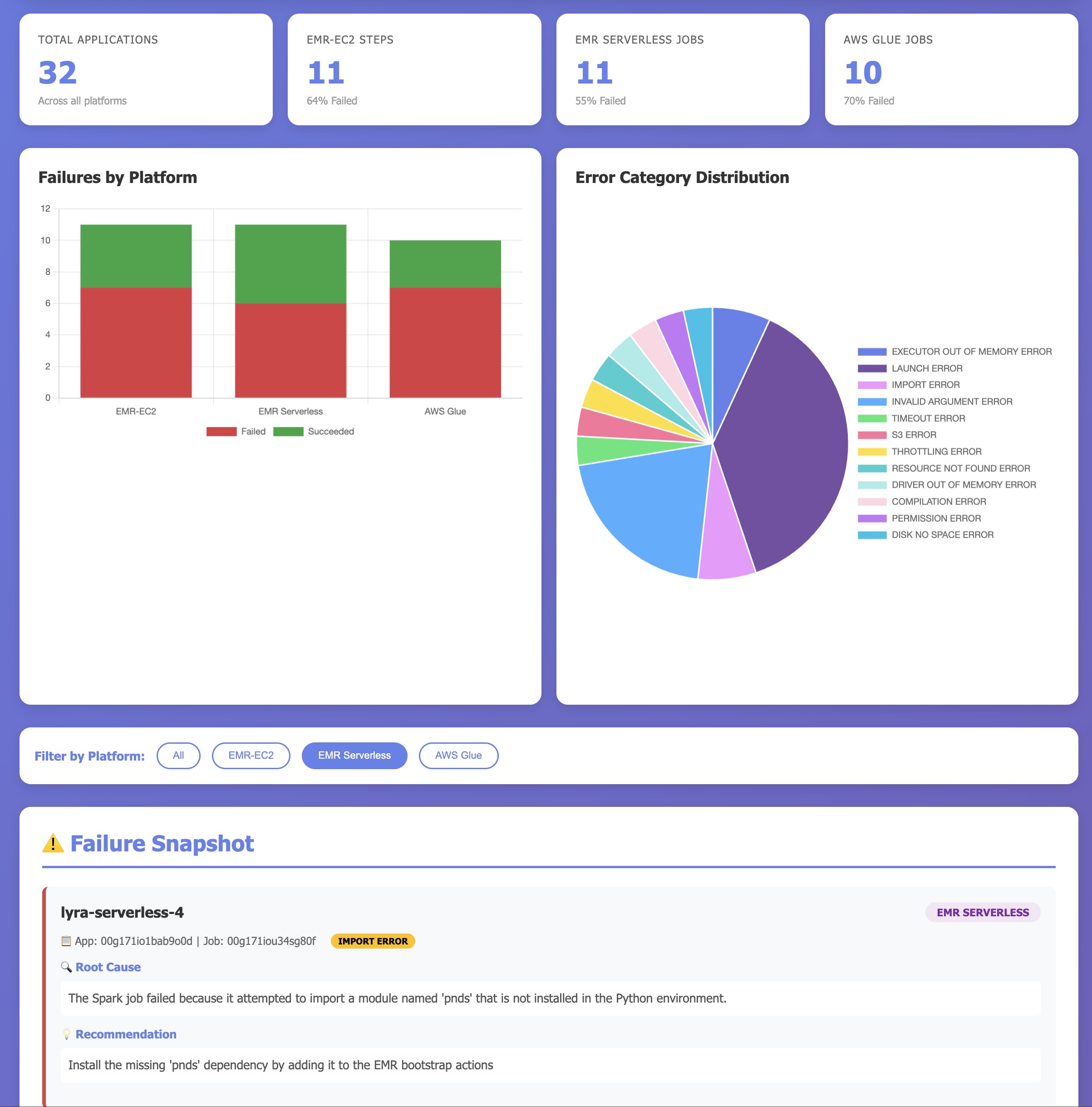

Answer 3 – Clever Dashboards: Use the Apache Spark troubleshooting agent with Kiro IDE to visualise account degree utility failures: what failed, why failed and the best way to repair

Understanding the well being of your Spark workloads throughout a number of platforms requires consolidating information from Amazon EMR (each EC2 and Serverless) and AWS Glue. Groups sometimes construct customized monitoring options by writing scripts to question a number of APIs, mixture metrics, and generate stories which might be time consuming and require lively upkeep.

With Kiro IDE and the Apache Spark troubleshooting agent, you may construct complete monitoring dashboards conversationally. As a substitute of writing customized code to mixture workload metrics, you may describe what you need to monitor, and the agent generates an entire dashboard exhibiting general efficiency metrics, error class distributions for failures, success charges throughout platforms, and important failures requiring instant consideration. In contrast to conventional dashboards that solely present conventional KPIs and metrics on what utility failed, this dashboard makes use of the Spark troubleshooting agent to supply insights to customers on why the functions failed, and how they are often fastened. The next video demonstrates constructing a multi-platform monitoring dashboard utilizing Kiro IDE:

The immediate used throughout the demo:

Clear up

To keep away from incurring future AWS costs, delete the assets you created throughout this walkthrough:

- Delete the AWS CloudFormation stack.

- When you created an Amazon EventBridge rule for integration, delete these assets.

Conclusion

On this publish, we demonstrated how the Apache Spark troubleshooting agent transforms hours of handbook investigation into pure language conversations, considerably decreasing troubleshooting time from hours to minutes and making Spark experience accessible to all. By integrating pure language diagnostics into your present growth instruments—whether or not Kiro CLI, Kiro IDE, or different MCP-compatible AI assistants—your groups can give attention to constructing progressive functions as a substitute of debugging failures.

Particular thanks

A particular due to everybody who contributed from engineering and science to the launch of the Spark troubleshooting agent and the distant MCP service: Tony Rusignuolo, Anshi Shrivastava, Martin Ma, Hirva Patel, Pranjal Srivastava, Weijing Cai, Rupak Ravi, Bo Li, Vaibhav Naik, XiaoRun Yu, Tina Shao, Pramod Chunduri, Ray Liu, Yueying Cui, Savio Dsouza, Kinshuk Pahare, Tim Kraska, Santosh Chandrachood, Paul Meighan and Rick Sears.

A particular due to all of our companions who contributed to the launch of the Spark troubleshooting agent and the distant MCP service: Karthik Prabhakar, Suthan Phillips, Basheer Sheriff, Kamen Sharlandjiev, Archana Inapudi, Vara Bonthu, McCall Peltier, Lydia Kautsky, Larry Weber, Jason Berkovitz, Jordan Vaughn, Amar Wakharkar, Subramanya Vajiraya, Boyko Radulov and Ishan Gaur.

Concerning the authors