Because the rise of AI chatbots, Google’s Gemini has emerged as one of the vital highly effective gamers driving the evolution of clever methods. Past its conversational energy, Gemini additionally unlocks sensible potentialities in pc imaginative and prescient, enabling machines to see, interpret, and describe the world round them.

This information walks you thru the steps to leverage Google Gemini for pc imaginative and prescient, together with how one can arrange your setting, ship photos with directions, and interpret the mannequin’s outputs for object detection, caption era, and OCR. We’ll additionally contact on information annotation instruments (like these used with YOLO) to present context for customized coaching situations.

What’s Google Gemini?

Google Gemini is a household of AI fashions constructed to deal with a number of information sorts, resembling textual content, photos, audio, and code collectively. This implies they will course of duties that contain understanding each photos and phrases.

Gemini 2.5 Professional Options

- Multimodal Enter: It accepts mixtures of textual content and pictures in a single request.

- Reasoning: The mannequin can analyze data from the inputs to carry out duties like figuring out objects or describing scenes.

- Instruction Following: It responds to textual content directions (prompts) that information its evaluation of the picture.

These options permit builders to make use of Gemini for vision-related duties by an API with out coaching a separate mannequin for every job.

The Position of Knowledge Annotation: The YOLO Annotator

Whereas Gemini fashions present highly effective zero-shot or few-shot capabilities for these pc imaginative and prescient duties, constructing extremely specialised pc imaginative and prescient fashions requires coaching on a dataset tailor-made to the precise drawback. That is the place information annotation turns into important, notably for supervised studying duties like coaching a customized object detector.

The YOLO Annotator (typically referring to instruments suitable with the YOLO format, like Labeling, CVAT, or Roboflow) is designed to create labeled datasets.

What’s Knowledge Annotation?

For object detection, annotation includes drawing bounding packing containers round every object of curiosity in a picture and assigning a category label (e.g., ‘automobile’, ‘individual’, ‘canine’). This annotated information tells the mannequin what to search for and the place throughout coaching.

Key Options of Annotation Instruments (like YOLO Annotator)

- Consumer Interface: They supply graphical interfaces permitting customers to load photos, draw packing containers (or polygons, keypoints, and many others.), and assign labels effectively.

- Format Compatibility: Instruments designed for YOLO fashions save annotations in a selected textual content file format that YOLO coaching scripts anticipate (sometimes one .txt file per picture, containing class index and normalized bounding field coordinates).

- Effectivity Options: Many instruments embrace options like hotkeys, computerized saving, and generally model-assisted labeling to hurry up the customarily time-consuming annotation course of. Batch processing permits for more practical dealing with of enormous picture units.

- Integration: Utilizing commonplace codecs like YOLO ensures that the annotated information could be simply used with standard coaching frameworks, together with Ultralytics YOLO.

Whereas Google Gemini for Laptop Imaginative and prescient, can detect normal objects with out prior annotation, when you wanted a mannequin to detect very particular, customized objects (e.g., distinctive varieties of industrial gear, particular product defects), you’ll seemingly want to gather photos and annotate them utilizing a device like a YOLO annotator to coach a devoted YOLO mannequin.

Code Implementation – Google Gemini for Laptop Imaginative and prescient

First, it’s essential to set up the required software program libraries.

Step 1: Set up the Conditions

1. Set up Libraries

Run this command in your terminal:

!uv pip set up -U -q google-genai ultralyticsThis command installs the google-genai library to speak with the Gemini API and the ultralytics library, which accommodates useful capabilities for dealing with photos and drawing on them.

2. Import Modules

Add these traces to your Python Pocket book:

import json

import cv2

import ultralytics

from google import genai

from google.genai import sorts

from PIL import Picture

from ultralytics.utils.downloads import safe_download

from ultralytics.utils.plotting import Annotator, colours

ultralytics.checks()This code imports libraries for duties like studying photos (cv2, PIL), dealing with JSON information (json), interacting with the API (google.generativeai), and utility capabilities (ultralytics).

3. Configure API Key

Initialize the shopper utilizing your Google AI API key.

# Exchange "your_api_key" together with your precise key

# Use GenerativeModel for newer variations of the library

# Initialize the Gemini shopper together with your API key

shopper = genai.Consumer(api_key=”your_api_key”)This step prepares your script to ship authenticated requests.

Step 2: Perform to Work together with Gemini

Create a operate to ship requests to the mannequin. This operate takes a picture and a textual content immediate and returns the mannequin’s textual content output.

def inference(picture, immediate, temp=0.5):

"""

Performs inference utilizing Google Gemini 2.5 Professional Experimental mannequin.

Args:

picture (str or genai.sorts.Blob): The picture enter, both as a base64-encoded string or Blob object.

immediate (str): A textual content immediate to information the mannequin's response.

temp (float, non-compulsory): Sampling temperature for response randomness. Default is 0.5.

Returns:

str: The textual content response generated by the Gemini mannequin primarily based on the immediate and picture.

"""

response = shopper.fashions.generate_content(

mannequin="gemini-2.5-pro-exp-03-25",

contents=[prompt, image], # Present each the textual content immediate and picture as enter

config=sorts.GenerateContentConfig(

temperature=temp, # Controls creativity vs. determinism in output

),

)

return response.textual content # Return the generated textual responseRationalization

- This operate sends the picture and your textual content instruction (immediate) to the Gemini mannequin specified within the model_client.

- The temperature setting (temp) influences output randomness; decrease values give extra predictable outcomes.

Step 3: Getting ready Picture Knowledge

You should load photos appropriately earlier than sending them to the mannequin. This operate downloads a picture if wanted, reads it, converts the colour format, and returns a PIL Picture object and its dimensions.

def read_image(filename):

image_name = safe_download(filename)

# Learn picture with opencv

picture = cv2.cvtColor(cv2.imread(f"/content material/{image_name}"), cv2.COLOR_BGR2RGB)

# Extract width and peak

h, w = picture.form[:2]

# # Learn the picture utilizing OpenCV and convert it into the PIL format

return Picture.fromarray(picture), w, hRationalization

- This operate makes use of OpenCV (cv2) to learn the picture file.

- It converts the picture coloration order to RGB, which is commonplace.

- It returns the picture as a PIL object, appropriate for the inference operate, and its width and peak.

Step 4: Outcome formatting

def clean_results(outcomes):

"""Clear the outcomes for visualization."""

return outcomes.strip().removeprefix("```json").removesuffix("```").strip()This operate codecs the consequence into JSON format.

Activity 1: Object Detection

Gemini can discover objects in a picture and report their areas (bounding packing containers) primarily based in your textual content directions.

# Outline the textual content immediate

immediate = """

Detect the second bounding packing containers of objects in picture.

"""

# Mounted, plotting operate is determined by this.

output_prompt = "Return simply box_2d and labels, no further textual content."

picture, w, h = read_image("https://media-cldnry.s-nbcnews.com/picture/add/t_fit-1000w,f_auto,q_auto:greatest/newscms/2019_02/2706861/190107-messy-desk-stock-cs-910a.jpg") # Learn img, extract width, peak

outcomes = inference(picture, immediate + output_prompt) # Carry out inference

cln_results = json.masses(clean_results(outcomes)) # Clear outcomes, checklist convert

annotator = Annotator(picture) # initialize Ultralytics annotator

for idx, merchandise in enumerate(cln_results):

# By default, gemini mannequin return output with y coordinates first.

# Scale normalized field coordinates (0–1000) to picture dimensions

y1, x1, y2, x2 = merchandise["box_2d"] # bbox submit processing,

y1 = y1 / 1000 * h

x1 = x1 / 1000 * w

y2 = y2 / 1000 * h

x2 = x2 / 1000 * w

if x1 > x2:

x1, x2 = x2, x1 # Swap x-coordinates if wanted

if y1 > y2:

y1, y2 = y2, y1 # Swap y-coordinates if wanted

annotator.box_label([x1, y1, x2, y2], label=merchandise["label"], coloration=colours(idx, True))

Picture.fromarray(annotator.consequence()) # show the outputSupply Picture: Hyperlink

Output

Rationalization

- The immediate tells the mannequin what to search out and how one can format the output (JSON)

- It converts the normalized field coordinates (0-1000) to pixel coordinates utilizing the picture width (w) and peak (h).

- The Annotator device attracts the packing containers and labels on a replica of the picture

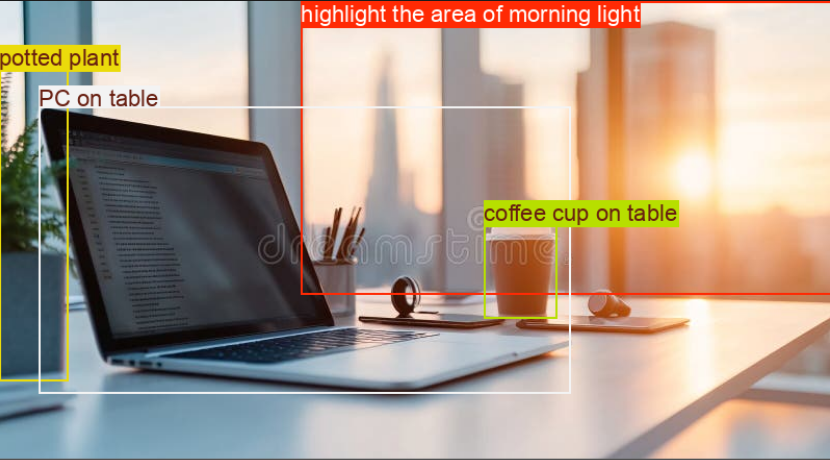

Activity 2: Testing Reasoning Capabilities

With Gemini fashions, you’ll be able to deal with complicated duties utilizing superior reasoning that understands context and delivers extra exact outcomes.

# Outline the textual content immediate

immediate = """

Detect the second bounding field round:

spotlight the realm of morning mild +

PC on desk

potted plant

espresso cup on desk

"""

# Mounted, plotting operate is determined by this.

output_prompt = "Return simply box_2d and labels, no further textual content."

picture, w, h = read_image("https://thumbs.dreamstime.com/b/modern-office-workspace-laptop-coffee-cup-cityscape-sunrise-sleek-desk-featuring-stationery-organized-neatly-city-345762953.jpg") # Learn picture and extract width, peak

outcomes = inference(picture, immediate + output_prompt)

# Clear the outcomes and cargo ends in checklist format

cln_results = json.masses(clean_results(outcomes))

annotator = Annotator(picture) # initialize Ultralytics annotator

for idx, merchandise in enumerate(cln_results):

# By default, gemini mannequin return output with y coordinates first.

# Scale normalized field coordinates (0–1000) to picture dimensions

y1, x1, y2, x2 = merchandise["box_2d"] # bbox submit processing,

y1 = y1 / 1000 * h

x1 = x1 / 1000 * w

y2 = y2 / 1000 * h

x2 = x2 / 1000 * w

if x1 > x2:

x1, x2 = x2, x1 # Swap x-coordinates if wanted

if y1 > y2:

y1, y2 = y2, y1 # Swap y-coordinates if wanted

annotator.box_label([x1, y1, x2, y2], label=merchandise["label"], coloration=colours(idx, True))

Picture.fromarray(annotator.consequence()) # show the outputSupply Picture: Hyperlink

Output

Rationalization

- This code block accommodates a posh immediate to check the mannequin’s reasoning capabilities.

- It converts the normalized field coordinates (0-1000) to pixel coordinates utilizing the picture width (w) and peak (h).

- The Annotator device attracts the packing containers and labels on a replica of the picture.

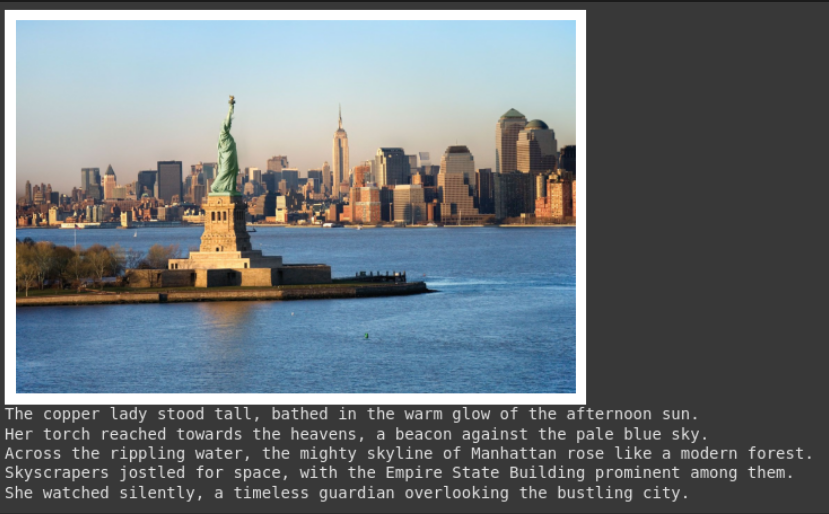

Activity 3: Picture Captioning

Gemini can create textual content descriptions for a picture.

# Outline the textual content immediate

immediate = """

What's contained in the picture, generate an in depth captioning within the type of brief

story, Make 4-5 traces and begin every sentence on a brand new line.

"""

picture, _, _ = read_image("https://cdn.britannica.com/61/93061-050-99147DCE/Statue-of-Liberty-Island-New-York-Bay.jpg") # Learn picture and extract width, peak

plt.imshow(picture)

plt.axis('off') # Conceal axes

plt.present()

print(inference(picture, immediate)) # Show the outcomesSupply Picture: Hyperlink

Output

Rationalization

- This immediate asks for a selected type of description (narrative, 4 traces, new traces).

- The supplied picture is proven within the output.

- The operate returns the generated textual content. That is helpful for creating alt textual content or summaries.

Activity 4: Optical Character Recognition (OCR)

Gemini can learn textual content inside a picture and inform you the place it discovered the textual content.

# Outline the textual content immediate

immediate = """

Extract the textual content from the picture

"""

# Mounted, plotting operate is determined by this.

output_prompt = """

Return simply box_2d which shall be location of detected textual content areas + label"""

picture, w, h = read_image("https://cdn.mos.cms.futurecdn.internet/4sUeciYBZHaLoMa5KiYw7h-1200-80.jpg") # Learn picture and extract width, peak

outcomes = inference(picture, immediate + output_prompt)

# Clear the outcomes and cargo ends in checklist format

cln_results = json.masses(clean_results(outcomes))

print()

annotator = Annotator(picture) # initialize Ultralytics annotator

for idx, merchandise in enumerate(cln_results):

# By default, gemini mannequin return output with y coordinates first.

# Scale normalized field coordinates (0–1000) to picture dimensions

y1, x1, y2, x2 = merchandise["box_2d"] # bbox submit processing,

y1 = y1 / 1000 * h

x1 = x1 / 1000 * w

y2 = y2 / 1000 * h

x2 = x2 / 1000 * w

if x1 > x2:

x1, x2 = x2, x1 # Swap x-coordinates if wanted

if y1 > y2:

y1, y2 = y2, y1 # Swap y-coordinates if wanted

annotator.box_label([x1, y1, x2, y2], label=merchandise["label"], coloration=colours(idx, True))

Picture.fromarray(annotator.consequence()) # show the outputSupply Picture: Hyperlink

Output

Rationalization

- This makes use of a immediate just like object detection however asks for textual content (label) as a substitute of object names.

- The code extracts the textual content and its location, printing the textual content and drawing packing containers on the picture.

- That is helpful for digitizing paperwork or studying textual content from indicators or labels in photographs.

Conclusion

Google Gemini for Laptop Imaginative and prescient makes it straightforward to deal with duties like object detection, picture captioning, and OCR by easy API calls. By sending photos together with clear textual content directions, you’ll be able to information the mannequin’s understanding and get usable, real-time outcomes.

That stated, whereas Gemini is nice for general-purpose duties or fast experiments, it’s not at all times the most effective match for extremely specialised use circumstances. Suppose you’re working with area of interest objects or want tighter management over accuracy. In that case, the normal route nonetheless holds robust: accumulate your dataset, annotate it with instruments like YOLO labelers, and prepare a customized mannequin tuned on your wants.

Login to proceed studying and revel in expert-curated content material.