You’re not short on tools. Or models. Or frameworks.

What you’re short on is a principled way to use them at scale.

Building effective generative AI workflows, especially agentic ones, means navigating a combinatorial explosion of choices.

Every new retriever, prompt strategy, text splitter, embedding model, or synthesizing LLM multiplies the space of possible workflows, resulting in a search space with over 10²³ possible configurations.

Trial-and-error doesn’t scale. And model-level benchmarks don’t reflect how components behave when stitched into full systems.

That’s why we built syftr, an open source framework for automatically identifying Pareto-optimal workflows across accuracy, cost, and latency constraints.

The complexity behind generative AI workflows

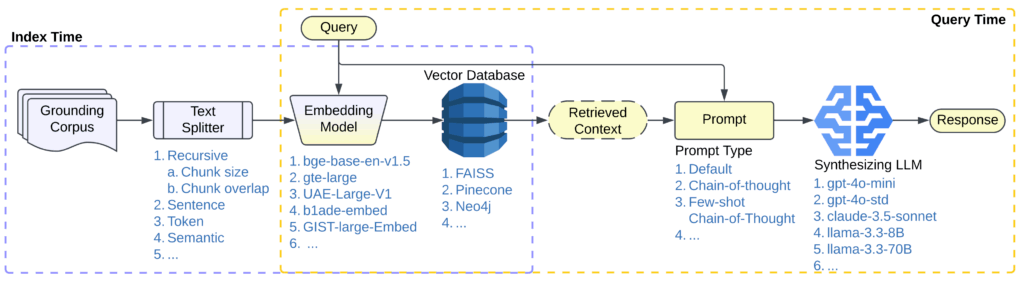

To illustrate how quickly complexity compounds, consider even a relatively simple RAG pipeline like the one shown in Figure 1.

Each component (retriever, prompt strategy, embedding model, text splitter, synthesizing LLM) requires careful selection and tuning. And beyond those decisions, there’s an expanding landscape of end-to-end workflow strategies, from single-agent workflows like ReAct and LATS to multi-agent workflows like CaptainAgent and Magentic-One.

What’s missing is a scalable, principled way to explore this configuration space.

That’s where syftr comes in.

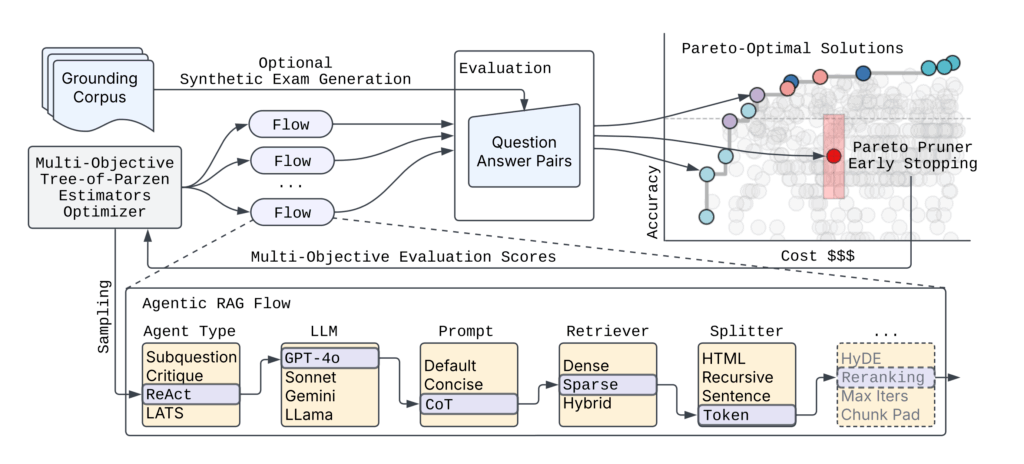

Its open source framework uses multi-objective Bayesian Optimization to efficiently search for Pareto-optimal RAG workflows, balancing cost, accuracy, and latency across configurations that would be impossible to test manually.
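To make "Pareto-optimal" concrete, here is a minimal sketch of filtering a set of evaluated workflows down to the non-dominated ones. The `Result` type, the workflow labels, and all numbers are made up for illustration; this is not syftr's code or data.

```python
from typing import NamedTuple


class Result(NamedTuple):
    name: str        # hypothetical workflow label
    accuracy: float  # higher is better
    cost: float      # lower is better
    latency: float   # lower is better


def dominates(a: Result, b: Result) -> bool:
    """True if a is no worse than b on every objective and strictly better on one."""
    no_worse = a.accuracy >= b.accuracy and a.cost <= b.cost and a.latency <= b.latency
    better = a.accuracy > b.accuracy or a.cost < b.cost or a.latency < b.latency
    return no_worse and better


def pareto_front(results: list[Result]) -> list[Result]:
    """Keep only the results that no other result dominates."""
    return [r for r in results if not any(dominates(o, r) for o in results)]


# Illustrative values only.
results = [
    Result("A", accuracy=0.80, cost=1.00, latency=2.0),
    Result("B", accuracy=0.78, cost=0.10, latency=1.0),
    Result("C", accuracy=0.60, cost=0.50, latency=3.0),  # dominated by B
    Result("D", accuracy=0.50, cost=0.02, latency=0.5),
]
front = pareto_front(results)
print([r.name for r in front])  # ['A', 'B', 'D']
```

Workflow C is dropped because B beats it on all three objectives. A, B, and D each win on at least one objective, so choosing among them is a tradeoff rather than a clear win.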

Benchmarking Pareto-optimal workflows with syftr

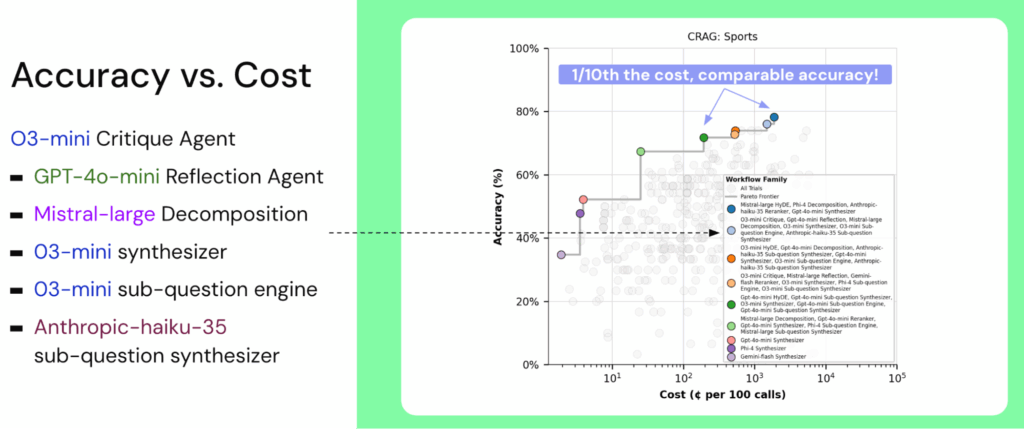

Once syftr is applied to a workflow configuration space, it surfaces candidate pipelines that achieve strong tradeoffs across key performance metrics.

The example below shows syftr’s output on the CRAG (Comprehensive RAG) Sports benchmark, highlighting workflows that maintain high accuracy while significantly reducing cost.

While Figure 2 shows what syftr can deliver, it’s equally important to understand how these results are achieved.

At the core of syftr is a multi-objective search process designed to efficiently navigate vast workflow configuration spaces. The framework prioritizes both performance and computational efficiency, which are essential requirements for real-world experimentation at scale.

Since evaluating every workflow in this space isn’t feasible, we typically evaluate around 500 workflows per run.

To make this process even more efficient, syftr includes a novel early stopping mechanism, Pareto Pruner, which halts evaluation of workflows that are unlikely to improve the Pareto frontier. This significantly reduces computational cost and search time while preserving result quality.
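The idea of stopping an evaluation early can be sketched with a deliberately conservative rule. This is an illustration only, not syftr's actual Pareto Pruner, whose details are in the paper: it stops a workflow partway through a dataset once even its best-case final result could not beat a point already on the frontier. The function name, its parameters, and the numbers are all hypothetical.

```python
def should_prune(
    correct: int,
    seen: int,
    total: int,
    cost_so_far: float,
    frontier: list[tuple[float, float]],
) -> bool:
    """Decide whether to stop evaluating a workflow early.

    frontier holds (accuracy, total_cost) pairs for fully evaluated workflows.
    """
    # Best case: every remaining example is answered correctly...
    best_accuracy = (correct + (total - seen)) / total
    # ...and the remaining examples cost nothing.
    min_cost = cost_so_far
    # If some frontier point matches or beats even this best case,
    # finishing the evaluation cannot improve the frontier.
    return any(acc >= best_accuracy and cost <= min_cost for acc, cost in frontier)


# Illustrative frontier: (accuracy, total cost in dollars).
frontier = [(0.80, 10.0), (0.60, 2.0)]

print(should_prune(correct=10, seen=40, total=100, cost_so_far=3.0, frontier=frontier))  # False
print(should_prune(correct=15, seen=60, total=100, cost_so_far=4.5, frontier=frontier))  # True
```

In the second call the workflow can reach at most 0.55 accuracy and has already spent more than the frontier point at (0.60, 2.0), so it is stopped. A rule based on hard bounds like this one prunes less aggressively than one based on "unlikely to improve", which would need a statistical estimate of the final accuracy.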

Why existing benchmarks aren’t enough

While model benchmarks, like MMLU, LiveBench, Chatbot Arena, and the Berkeley Function-Calling Leaderboard, have advanced our understanding of isolated model capabilities, foundation models rarely operate alone in real-world production environments.

Instead, they’re typically one component, albeit an essential one, within larger, sophisticated AI systems.

Measuring intrinsic model performance is necessary, but it leaves open critical system-level questions:

- How do you construct a workflow that meets task-specific goals for accuracy, latency, and cost?

- Which models should you use, and in which parts of the pipeline?

syftr addresses this gap by enabling automated, multi-objective evaluation across entire workflows.

It captures nuanced tradeoffs that emerge only when components interact within a broader pipeline, and systematically explores configuration spaces that are otherwise impractical to evaluate manually.

syftr is the first open-source framework specifically designed to automatically identify Pareto-optimal generative AI workflows that balance multiple competing objectives simultaneously: not just accuracy, but latency and cost as well.

It draws inspiration from existing research, including:

- AutoRAG, which focuses solely on optimizing for accuracy

- Kapoor et al.’s work, AI Agents That Matter, which emphasizes cost-controlled evaluation to prevent incentivizing overly costly, leaderboard-focused agents. This principle serves as one of our core research inspirations.

Importantly, syftr is also orthogonal to LLM-as-optimizer frameworks like Trace and TextGrad, and generic flow optimizers like DSPy. Such frameworks can be combined with syftr to further optimize prompts in workflows.

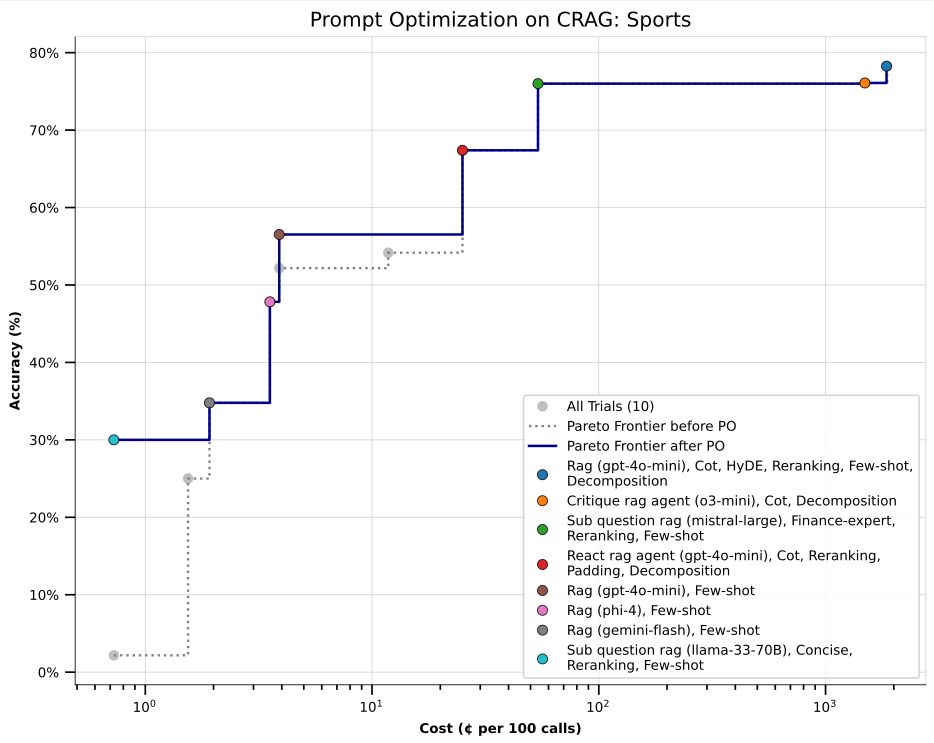

In early experiments, syftr first identified Pareto-optimal workflows on the CRAG Sports benchmark.

We then applied Trace to optimize prompts across all of those configurations, taking a two-stage approach: multi-objective workflow search followed by fine-grained prompt tuning.

The result: notable accuracy improvements, especially in low-cost workflows that initially exhibited lower accuracy (those clustered in the lower-left of the Pareto frontier). These gains suggest that post-hoc prompt optimization can meaningfully boost performance, even in highly cost-constrained settings.

This two-stage approach (first multi-objective configuration search, then prompt refinement) highlights the benefits of combining syftr with specialized downstream tools, enabling modular and flexible workflow optimization strategies.

Building and extending syftr’s search space

Syftr cleanly separates the workflow search space from the underlying optimization algorithm. This modular design enables users to easily extend or customize the space, adding or removing flows, models, and components by editing configuration files.

The default implementation uses Multi-Objective Tree-of-Parzen-Estimators (MOTPE), but syftr supports swapping in other optimization strategies.

Contributions of new flows, modules, or algorithms are welcomed via pull request at github.com/datarobot/syftr.

Built on the shoulders of open source

syftr builds on a number of powerful open source libraries and frameworks:

- Ray for distributing and scaling search over large clusters of CPUs and GPUs

- Ray Serve for autoscaling model hosting

- Optuna for its flexible define-by-run interface (similar to PyTorch’s eager execution) and support for state-of-the-art multi-objective optimization algorithms

- LlamaIndex for building sophisticated agentic and non-agentic RAG workflows

- HuggingFace Datasets for a fast, collaborative, and uniform dataset interface

- Trace for optimizing textual components within workflows, such as prompts

syftr is framework-agnostic: workflows can be built using any orchestration library or modeling stack. This flexibility allows users to extend or adapt syftr to fit a wide variety of tooling preferences.

Case study: syftr on CRAG Sports

Benchmark setup

The CRAG benchmark dataset was introduced by Meta for the KDD Cup 2024 and includes three tasks:

- Task 1: Retrieval summarization

- Task 2: Knowledge graph and web retrieval

- Task 3: End-to-end RAG

syftr was evaluated on Task 3 (CRAG3), which includes 4,400 QA pairs spanning a wide range of topics. The official benchmark performs RAG over 50 webpages retrieved for each question.

To increase difficulty, we combined all webpages across all questions into a single corpus, creating a more realistic, challenging retrieval setting.

Note: Amazon Q pricing uses a per-user/month pricing model, which differs from the per-query token-based cost estimates used for syftr workflows.

Key observations and insights

Across datasets, syftr consistently surfaces meaningful optimization patterns:

- Non-agentic workflows dominate the Pareto frontier. They’re faster and cheaper, leading the optimizer to favor these configurations more frequently than agentic ones.

- GPT-4o-mini frequently appears in Pareto-optimal flows, suggesting it offers a strong balance of quality and cost as a synthesizing LLM.

- Reasoning models like o3-mini perform well on quantitative tasks (e.g., FinanceBench, InfiniteBench), likely due to their multi-hop reasoning capabilities.

- Pareto frontiers eventually flatten after an initial rise, with diminishing returns in accuracy relative to steep cost increases, underscoring the need for tools like syftr that help pinpoint efficient operating points.

We routinely find that the workflow at the knee point of the Pareto frontier loses just a few percentage points in accuracy compared to the most accurate setup, while being 10x cheaper.

syftr makes it easy to find that sweet spot.
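One common way to locate a knee point, sketched here as an assumption rather than as syftr's method, is to normalize cost and accuracy to the range [0, 1] and pick the frontier point that rises furthest above the straight line joining the cheapest and most accurate endpoints. The frontier values below are invented to mirror the pattern described above.

```python
def knee_point(frontier: list[tuple[float, float]]) -> tuple[float, float]:
    """Return the (cost, accuracy) point furthest above the endpoint-to-endpoint line.

    Assumes frontier is a Pareto frontier, so accuracy increases with cost.
    """
    points = sorted(frontier)  # cheapest first
    if len(points) < 2:
        return points[0]
    (min_cost, min_acc), (max_cost, max_acc) = points[0], points[-1]

    def gap(point: tuple[float, float]) -> float:
        cost, acc = point
        x = (cost - min_cost) / (max_cost - min_cost)  # normalized cost
        y = (acc - min_acc) / (max_acc - min_acc)      # normalized accuracy
        return y - x  # height above the diagonal from (0, 0) to (1, 1)

    return max(points, key=gap)


# Illustrative frontier: (cost per query in dollars, accuracy).
frontier = [(0.01, 0.50), (0.05, 0.70), (0.10, 0.78), (0.50, 0.80), (1.00, 0.81)]
knee = knee_point(frontier)
print(knee)  # (0.1, 0.78)
```

With these made-up numbers the knee sits 3 percentage points below the most accurate workflow at one tenth of the cost. Other knee definitions exist (for example, working on a log-cost axis), and they can select a different point.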

Cost of running syftr

In our experiments, we allocated a budget of ~500 workflow evaluations per task. Although exact costs vary based on the dataset and search space complexity, we consistently identified strong Pareto frontiers with a one-time search cost of approximately $500 per use case.

We expect this cost to decrease as more efficient search algorithms and space definitions are developed.

Importantly, this initial investment is minimal relative to the long-term gains from deploying optimized workflows, whether through reduced compute usage, improved accuracy, or better user experience in high-traffic systems.
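A rough break-even calculation shows how the one-time search cost compares with per-query savings. Only the $500 figure comes from the text above; the two per-query costs are hypothetical, chosen to reflect a 10x cheaper workflow.

```python
import math

SEARCH_COST = 500.0          # one-time search cost in dollars, from the text above
BASELINE_COST = 0.010        # hypothetical cost per query before optimization
OPTIMIZED_COST = 0.001       # hypothetical cost per query, 10x cheaper


def break_even_queries(search_cost: float, baseline: float, optimized: float) -> int:
    """Number of queries after which the per-query savings cover the search cost."""
    savings_per_query = baseline - optimized
    if savings_per_query <= 0:
        raise ValueError("optimized workflow must be cheaper than the baseline")
    return math.ceil(search_cost / savings_per_query)


queries = break_even_queries(SEARCH_COST, BASELINE_COST, OPTIMIZED_COST)
print(queries)  # 55556
```

Under these assumptions the search pays for itself after roughly 56,000 queries. The real figure depends entirely on your traffic and on the actual per-query costs of the workflows involved.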

For detailed results across six benchmark tasks, including datasets beyond CRAG, refer to the full syftr paper.

Getting started and contributing

To get started with syftr, clone or fork the repository on GitHub. Benchmark datasets are available on HuggingFace, and syftr also supports user-defined datasets for custom experimentation.

The current search space includes:

- 9 proprietary LLMs

- 11 embedding models

- 4 general prompt strategies

- 3 retrievers

- 4 text splitters (with parameter configurations)

- 4 agentic RAG flows and 1 non-agentic RAG flow, each with associated hierarchical hyperparameters
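The counts above can be multiplied out to see how fast the space grows. The sketch below uses placeholder component names and assumes every choice can be freely combined with every other, which may not hold for every flow. It counts top-level categorical choices only. The 10²³ figure quoted earlier is far larger, presumably because it also counts the hyperparameters attached to each component.

```python
import math
import random

# Placeholder names only; the counts mirror the list above.
SEARCH_SPACE = {
    "llm": [f"llm_{i}" for i in range(9)],
    "embedding_model": [f"embedding_{i}" for i in range(11)],
    "prompt_strategy": [f"prompt_{i}" for i in range(4)],
    "retriever": [f"retriever_{i}" for i in range(3)],
    "text_splitter": [f"splitter_{i}" for i in range(4)],
    "flow": [f"agentic_{i}" for i in range(4)] + ["non_agentic"],
}


def count_combinations(space: dict[str, list[str]]) -> int:
    """Number of distinct ways to pick one option per component."""
    return math.prod(len(options) for options in space.values())


def sample_configuration(space: dict[str, list[str]], rng: random.Random) -> dict[str, str]:
    """Pick one option per component uniformly at random."""
    return {component: rng.choice(options) for component, options in space.items()}


n_combinations = count_combinations(SEARCH_SPACE)
print(n_combinations)  # 23760

config = sample_configuration(SEARCH_SPACE, random.Random(0))
print(config)
```

Even before any hyperparameters are considered, 23,760 combinations is well beyond the roughly 500 evaluations budgeted per run, which is why a guided search is used instead of exhaustive or purely random sampling.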

New components, such as models, flows, or search modules, can be added or modified via configuration files. Detailed walkthroughs are available to support customization.

syftr is developed fully in the open. We welcome contributions via pull requests, feature proposals, and benchmark reports. We’re particularly interested in ideas that advance the research direction or improve the framework’s extensibility.

What’s ahead for syftr

syftr is still evolving, with several active areas of research designed to extend its capabilities and practical impact:

- Meta-learning

Currently, each search is performed from scratch. We’re exploring meta-learning techniques that leverage prior runs across similar tasks to accelerate and guide future searches.

- Multi-agent workflow evaluation

While multi-agent systems are gaining traction, they introduce additional complexity and cost. We’re investigating how these workflows compare to single-agent and non-agentic pipelines, and when their tradeoffs are justified.

- Composability with prompt optimization frameworks

syftr is complementary to tools like DSPy, Trace, and TextGrad, which optimize textual components within workflows. We’re exploring ways to more deeply integrate these systems to jointly optimize structure and language.

- More agentic tasks

We started with question-answer tasks, a critical production use case for agents. Next, we plan to rapidly expand syftr’s task repertoire to code generation, data analysis, and interpretation. We also invite the community to suggest additional tasks for syftr to prioritize.

As these efforts progress, we aim to expand syftr’s value as a research tool, a benchmarking framework, and a practical assistant for system-level generative AI design.

If you’re working in this space, we welcome your feedback, ideas, and contributions.

Try the code, read the research

To explore syftr further, check out the GitHub repository or read the full paper on ArXiv for details on methodology and results.

Syftr has been accepted to appear at the International Conference on Automated Machine Learning (AutoML) in September 2025 in New York City.

We look forward to seeing what you build and discovering what’s next, together.