On this publish, we present you methods to implement real-time information ingestion from a number of Kafka subjects to Apache Hudi tables utilizing Amazon EMR. This answer streamlines information ingestion by processing a number of Amazon Managed Streaming for Apache Kafka (Amazon MSK) subjects in parallel whereas offering information high quality and scalability via change information seize (CDC) and Apache Hudi.

Organizations processing real-time information modifications throughout a number of sources usually wrestle with sustaining information consistency and managing useful resource prices. Conventional batch processing requires reprocessing whole datasets, resulting in excessive useful resource utilization and delayed analytics. By implementing CDC with Apache Hudi’s MultiTable DeltaStreamer, you’ll be able to obtain real-time updates; environment friendly incremental processing with atomicity, consistency, isolation, sturdiness (ACID) ensures; and seamless schema evolution whereas minimizing storage and compute prices.

Utilizing Amazon Easy Storage Service (Amazon S3), Amazon CloudWatch, Amazon EMR, Amazon MSK and AWS Glue Knowledge Catalog, you’ll construct a production-ready information pipeline that processes modifications from a number of information sources concurrently. Via this tutorial, you’ll be taught to configure CDC pipelines, handle table-specific configurations, implement 15-minute sync intervals, and keep your streaming pipeline. The outcome is a sturdy system that maintains information consistency whereas enabling real-time analytics and environment friendly useful resource utilization.

What’s CDC?

Think about a continuously evolving information stream, a river of knowledge the place updates move repeatedly. CDC acts like a classy internet, capturing solely the modifications—the inserts, updates, and deletes—taking place inside that information stream. Via this focused strategy, you’ll be able to give attention to the brand new and altered information, considerably bettering the effectivity of your information pipelines.There are quite a few benefits to embracing CDC:

- Lowered processing time – Why reprocess all the dataset when you’ll be able to focus solely on the updates? CDC minimizes processing overhead, saving invaluable time and sources.

- Actual-time insights – With CDC, your information pipelines change into extra responsive. You possibly can react to modifications virtually instantaneously, enabling real-time analytics and decision-making.

- Simplified information pipelines – Conventional batch processing can result in complicated pipelines. CDC streamlines the method, making information pipelines extra manageable and simpler to keep up.

Why Apache Hudi?

Hudi simplifies incremental information processing and information pipeline growth. This framework effectively manages enterprise necessities equivalent to information lifecycle and improves information high quality. You need to use Hudi to handle information on the record-level in Amazon S3 information lakes to simplify CDC and streaming information ingestion and deal with information privateness use instances requiring record-level updates and deletes. Datasets managed by Hudi are saved in Amazon S3 utilizing open storage codecs, whereas integrations with Presto, Apache Hive, Apache Spark, and Knowledge Catalog offer you close to actual time entry to up to date information. Apache Hudi facilitates incremental information processing for Amazon S3 by:

- Managing record-level modifications – Perfect for replace and delete use instances

- Open codecs – Integrates with Presto, Hive, Spark, and Knowledge Catalog

- Schema evolution – Helps dynamic schema modifications

- HoodieMultiTableDeltaStreamer – Simplifies ingestion into a number of tables utilizing centralized configurations

Hudi MultiTable Delta Streamer

The HoodieMultiTableStreamer affords a streamlined strategy to information ingestion from a number of sources into Hudi tables. By processing a number of sources concurrently via a single DeltaStreamer job, it eliminates the necessity for separate pipelines whereas lowering operational complexity. The framework supplies versatile configuration choices, and you’ll tailor settings for numerous codecs and schemas throughout totally different information sources.

One among its key strengths lies in unified information supply, organizing info in respective Hudi tables for seamless entry. The system’s clever upsert capabilities effectively deal with each inserts and updates, sustaining information consistency throughout your pipeline. Moreover, its strong schema evolution assist allows your information pipeline to adapt to altering enterprise necessities with out disruption, making it a perfect answer for dynamic information environments.

Resolution overview

On this part, we present methods to stream information to Apache Hudi Desk utilizing Amazon MSK. For this instance situation, there are information streams from three distinct sources residing in separate Kafka subjects. We goal to implement a streaming pipeline that makes use of the Hudi DeltaStreamer with multitable assist to ingest and course of this information at 15-minute intervals.

Mechanism

Utilizing MSK Join, information from a number of sources flows into MSK subjects. These subjects are then ingested into Hudi tables utilizing the Hudi MultiTable DeltaStreamer. On this pattern implementation, we create three Amazon MSK subjects and configure the pipeline to course of information in JSON format utilizing JsonKafkaSource, with the flexibleness to deal with Avro format when wanted via the suitable deserializer configuration

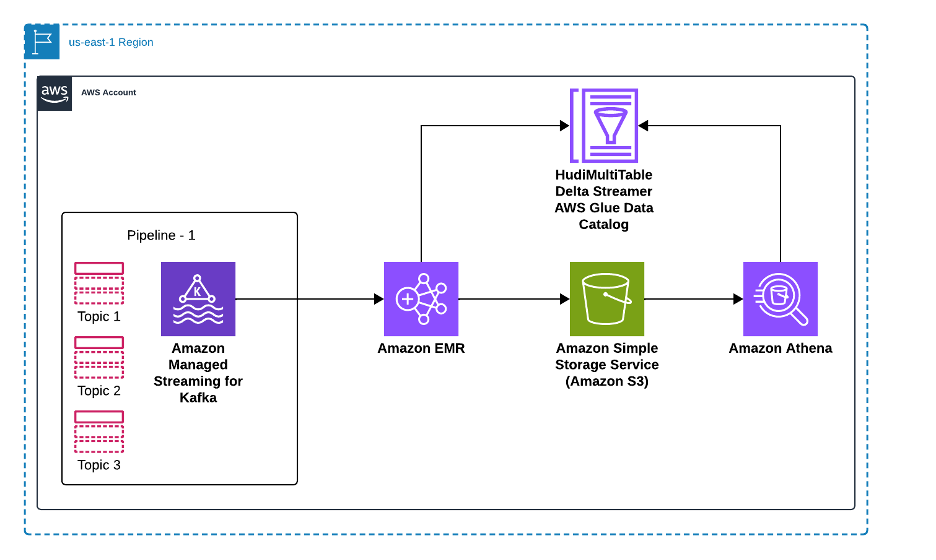

The next diagram illustrates how our answer processes information from a number of supply databases via Amazon MSK and Apache Hudi to allow analytics in Amazon Athena. Supply databases ship their information modifications—together with inserts, updates, and deletes—to devoted subjects in Amazon MSK, the place every information supply maintains its personal Kafka subject for change occasions. An Amazon EMR cluster runs the Apache Hudi MultiTable DeltaStreamer, which processes these a number of Kafka subjects in parallel, remodeling the information and writing it to Apache Hudi tables saved in Amazon S3. Knowledge Catalog maintains the metadata for these tables, enabling seamless integration with analytics instruments. Lastly, Amazon Athena supplies SQL question capabilities on the Hudi tables, permitting analysts to run each snapshot and incremental queries on the newest information. This structure scales horizontally as new information sources are added, with every supply getting its devoted Kafka subject and Hudi desk configuration, whereas sustaining information consistency and ACID ensures throughout all the pipeline.

To arrange the answer, it is advisable to full the next high-level steps:

- Arrange Amazon MSK and create Kafka subjects

- Create the Kafka subjects

- Create table-specific configurations

- Launch Amazon EMR cluster

- Invoke the Hudi MultiTable DeltaStreamer

- Confirm and question information

Conditions

To carry out the answer, it is advisable to have the next stipulations. For AWS providers and permissions, you want:

- AWS account:

- IAM roles:

- Amazon EMR service position (EMR_DefaultRole) with permissions for Amazon S3, AWS Glue and CloudWatch.

- Amazon EC2 occasion profile (EMR_EC2_DefaultRole) with S3 learn/write entry.

- Amazon MSK entry position with applicable permissions.

- S3 buckets:

- Configuration bucket for storing properties information and schemas.

- Output bucket for Hudi tables.

- Logging bucket (elective however advisable).

- Community configuration:

- Improvement instruments:

Arrange Amazon MSK and create Kafka subjects

On this step, you’ll create an MSK cluster and configure the required Kafka subjects to your information streams.

- To create an MSK cluster:

- Confirm the cluster standing:

aws kafka describe-cluster --cluster-arn $CLUSTER_ARN | jq '.ClusterInfo.State'

The command ought to return ACTIVE when the cluster is prepared.

Schema setup

To arrange the schema, full the next steps:

- Create your schema information.

input_schema.avsc:output_schema.avsc:

- Create and add schemas to your S3 bucket:

Create the Kafka subjects

To create the Kafka subjects, full the next steps:

- Get the bootstrap dealer string:

- Create the required subjects:

Configure Apache Hudi

The Hudi MultiTable DeltaStreamer configuration is split into two main parts to streamline and standardize information ingestion:

- Frequent configurations – These settings apply throughout all tables and outline the shared properties for ingestion. They embody particulars equivalent to shuffle parallelism, Kafka brokers, and customary ingestion configurations for all subjects.

- Desk-specific configurations – Every desk has distinctive necessities, such because the document key, schema file paths, and subject names. These configurations tailor every desk’s ingestion course of to its schema and information construction.

Create frequent configuration file

Frequent Config: kafka-hudi config file the place we specify kafka dealer and customary configuration for all subjects as beneath

Create the kafka-hudi-deltastreamer.properties file with the next properties:

Create table-specific configurations

For every subject, create its personal configuration with a subject identify and first key particulars. Full the next steps:

cust_sales_details.properties:cust_sales_appointment.properties:cust_info.properties:

These configurations type the spine of Hudi’s ingestion pipeline, enabling environment friendly information dealing with and sustaining real-time consistency. Schema configurations outline the construction of each supply and goal information, sustaining seamless information transformation and ingestion. Operational settings management how information is uniquely recognized, up to date, and processed incrementally.

The next are essential particulars for organising Hudi ingestion pipelines:

hoodie.deltastreamer.schemaprovider.supply.schema.file– The schema of the supply documenthoodie.deltastreamer.schemaprovider.goal.schema.file– The schema for the goal documenthoodie.deltastreamer.supply.kafka.subject– The supply MSK subject identifybootstap.servers– The Amazon MSK bootstrap server’s personal endpointauto.offset.reset– The buyer’s habits when there is no such thing as a dedicated place or when an offset is out of vary

Key operational fields to attain in-place updates for the generated schema embody:

hoodie.datasource.write.recordkey.subject– The document key subject. That is the distinctive identifier of a document in Hudi.hoodie.datasource.write.precombine.subject– When two data have the identical document key worth, Apache Hudi picks the one with the biggest worth for the pre-combined subject.hoodie.datasource.write.operation– The operation on the Hudi dataset. Attainable values embodyUPSERT,INSERT, andBULK_INSERT.

Launch Amazon EMR cluster

This step creates an EMR cluster with Apache Hudi put in. The cluster will run the MultiTable DeltaStreamer to course of information out of your Kafka subjects. To create the EMR cluster, enter the next:

Invoke the Hudi MultiTable DeltaStreamer

This step configures and begins the DeltaStreamer job that may repeatedly course of information out of your Kafka subjects into Hudi tables. Full the next steps:

- Hook up with the Amazon EMR grasp node:

- Execute the DeltaStreamer job:

For steady mode, it is advisable to add the next property:

With the job configured and working on Amazon EMR, the Hudi MultiTable DeltaStreamer effectively manages real-time information ingestion into your Amazon S3 information lake.

Confirm and question information

To confirm and question the information, full the next steps:

- Register tables in Knowledge Catalog:

- Question with Athena:

You need to use Amazon CloudWatch alarms to provide you with a warning of points with the EMR job or information processing. To create a CloudWatch alarm to watch EMR job failures, enter the next:

Actual-world influence of Hudi CDC pipelines

With the pipeline configured and working, you’ll be able to obtain real-time updates to your information lake, enabling sooner analytics and decision-making. As an example:

- Analytics – Up-to-date stock information maintains correct dashboards for ecommerce platforms.

- Monitoring – CloudWatch metrics verify the pipeline’s well being and effectivity.

- Flexibility – The seamless dealing with of schema evolution minimizes downtime and information inconsistencies.

Cleanup

To keep away from incurring future costs, observe these steps to wash up sources:

Conclusion

On this publish, we confirmed how one can construct a scalable information ingestion pipeline utilizing Apache Hudi’s MultiTable DeltaStreamer on Amazon EMR to course of information from a number of Amazon MSK subjects. You discovered methods to configure CDC with Apache Hudi, arrange real-time information processing with 15-minute sync intervals, and keep information consistency throughout a number of sources in your Amazon S3 information lake.

To be taught extra, discover these sources:

By combining CDC with Apache Hudi, you’ll be able to construct environment friendly, real-time information pipelines. The streamlined ingestion processes simplify administration, improve scalability, and keep information high quality, making this strategy a cornerstone of recent information architectures.

In regards to the authors