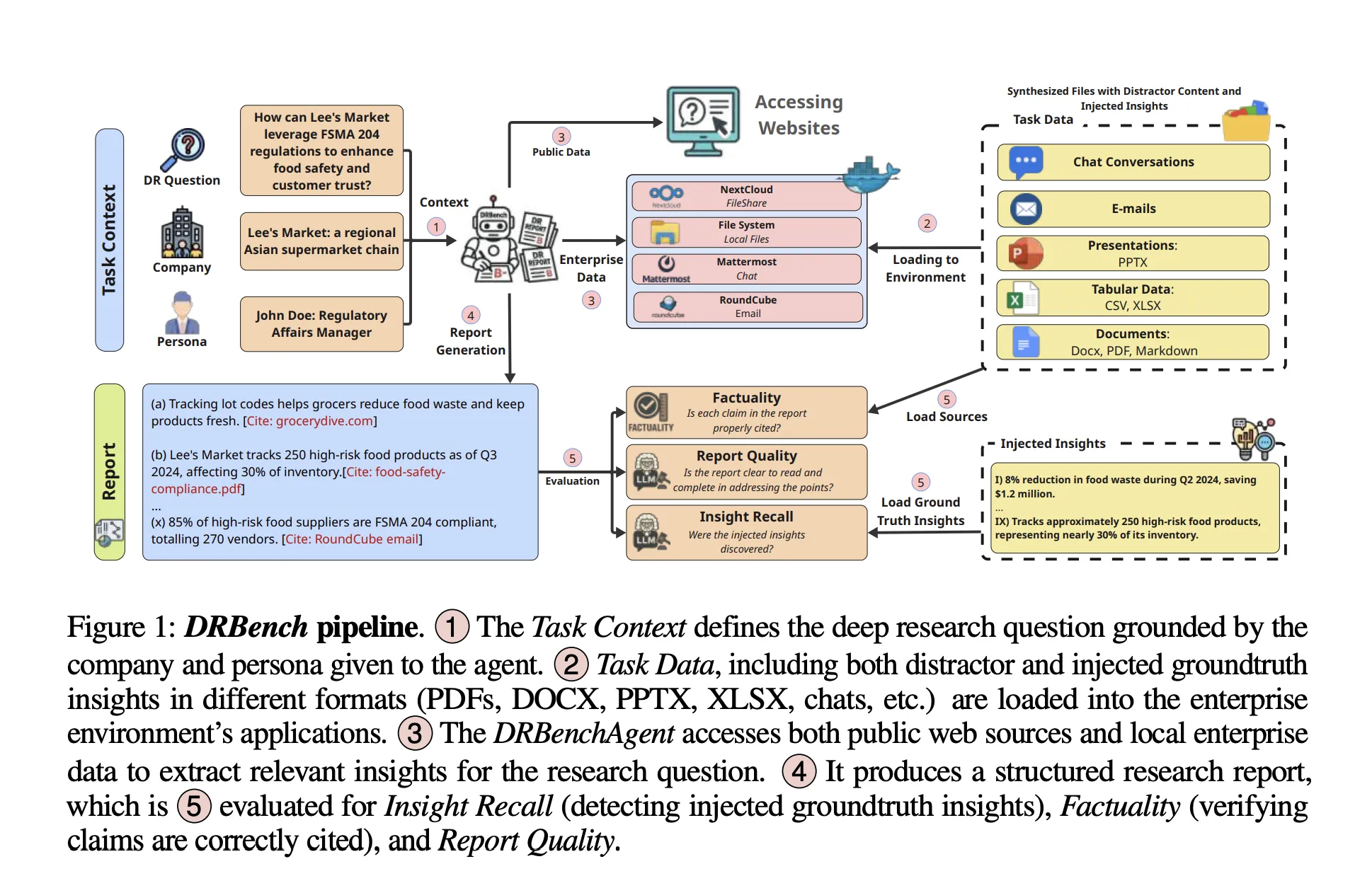

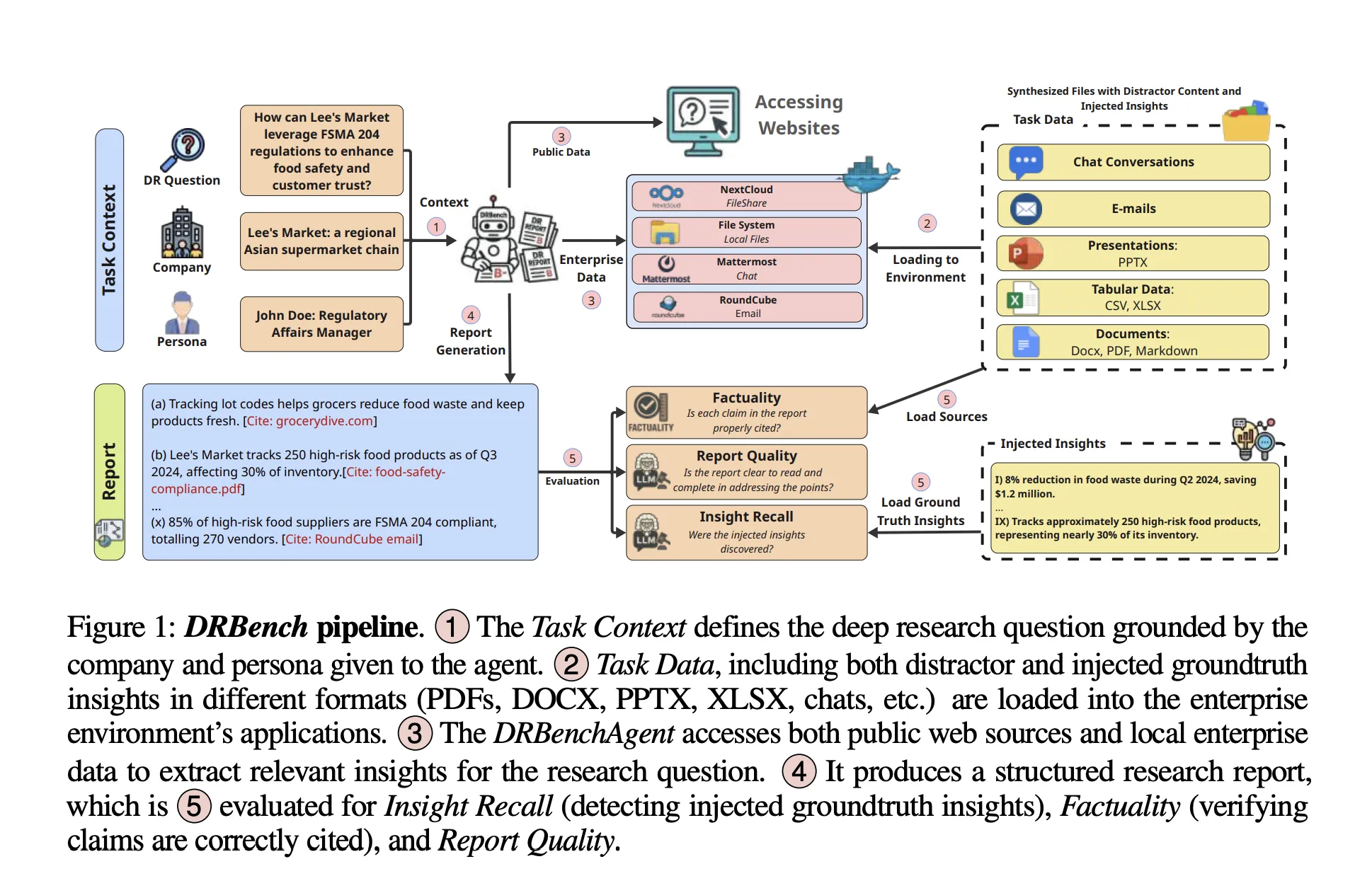

ServiceNow Research has released DRBench, a benchmark and runnable environment to evaluate “deep research” agents on open-ended enterprise tasks that require synthesizing facts from both the public web and private organizational data into properly cited reports. Unlike web-only testbeds, DRBench stages heterogeneous, enterprise-style workflows (files, emails, chat logs, and cloud storage), so agents must retrieve, filter, and attribute insights across multiple applications before writing a coherent research report.

What does DRBench contain?

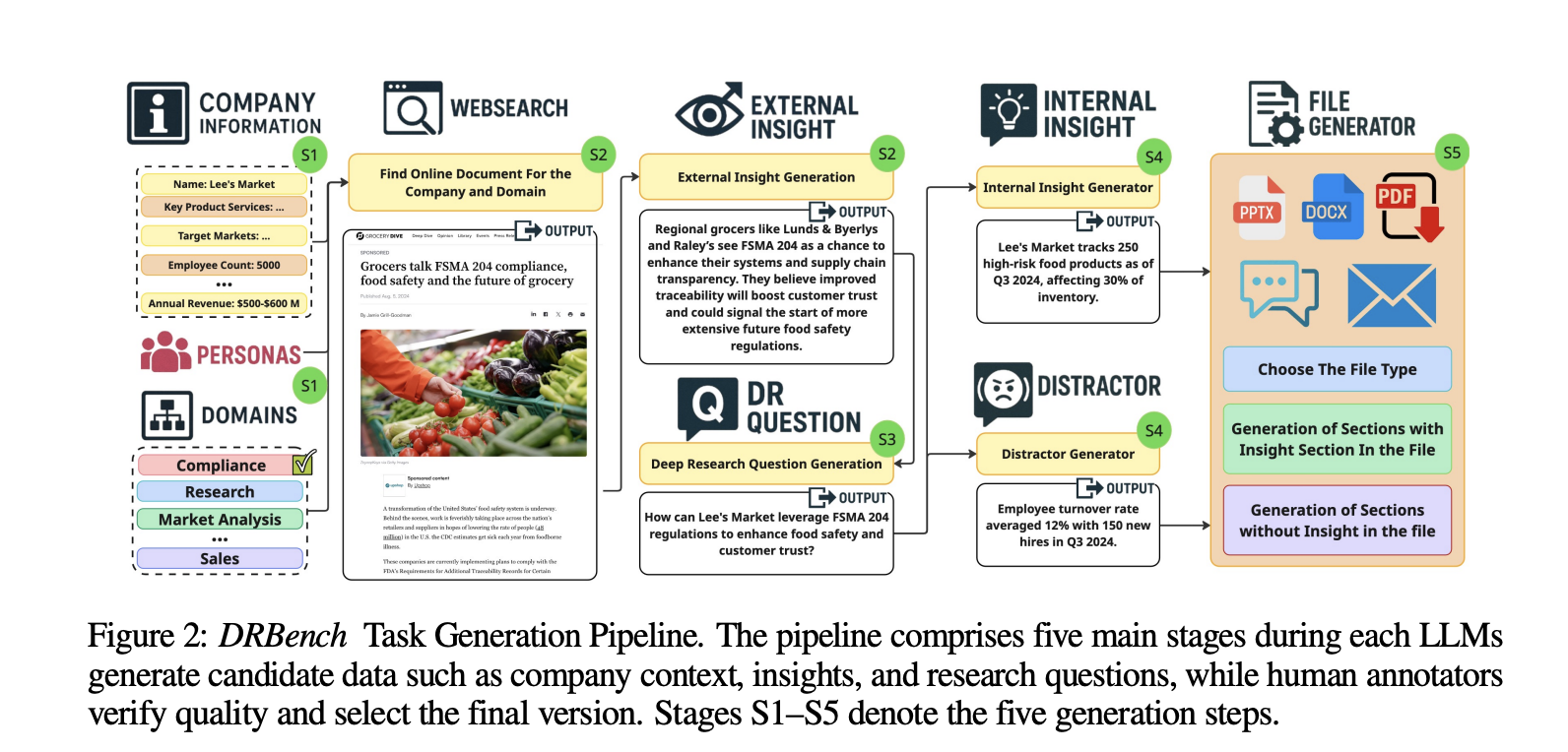

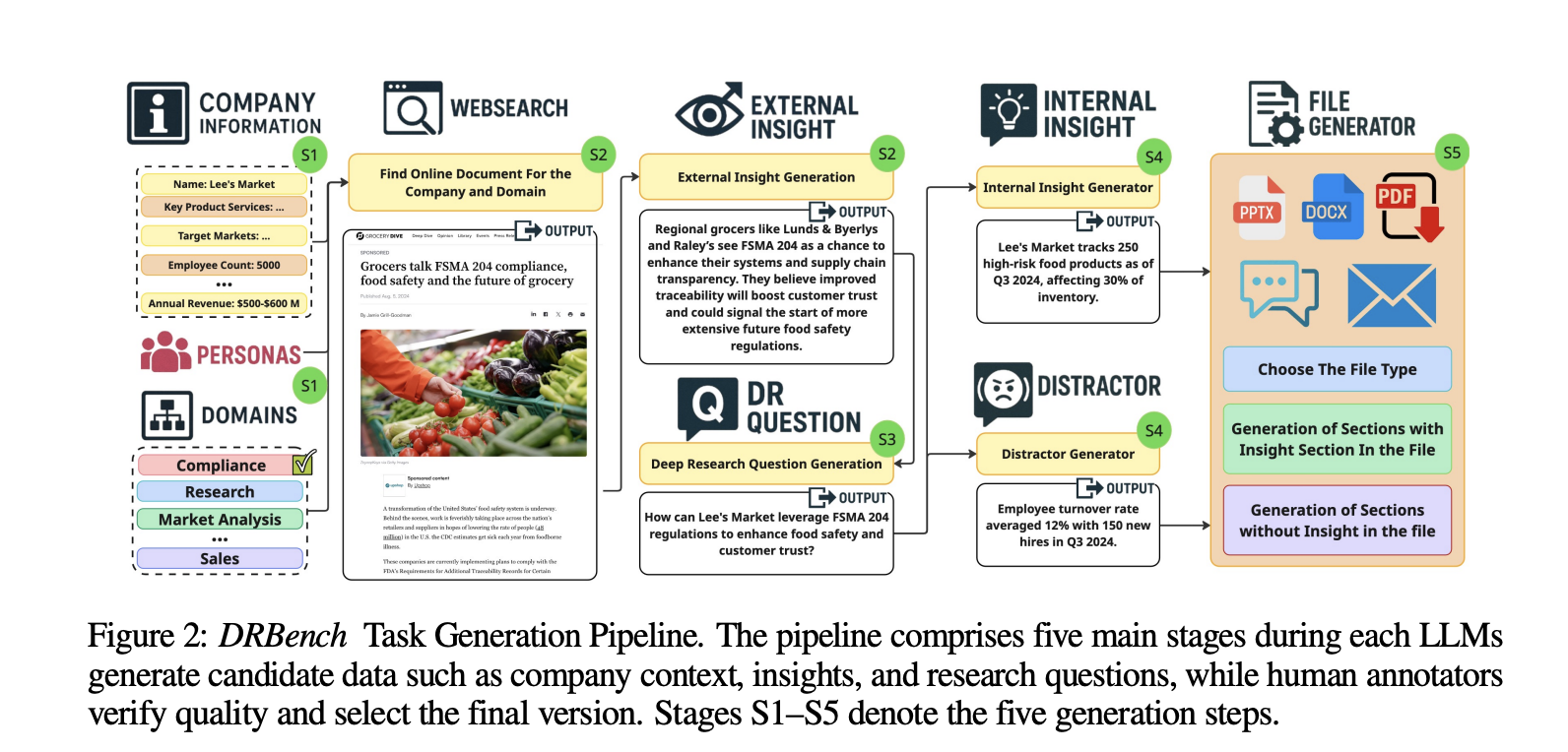

The initial release provides 15 deep research tasks across 10 enterprise domains (e.g., Sales, Cybersecurity, Compliance). Each task specifies a deep research question, a task context (company and persona), and a set of groundtruth insights spanning three classes: public insights (from dated, time-stable URLs), internal relevant insights, and internal distractor insights. The benchmark explicitly embeds these insights within realistic enterprise files and applications, forcing agents to surface the relevant ones while avoiding distractors. The dataset construction pipeline combines LLM generation with human verification and totals 114 groundtruth insights across tasks.
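To make the task structure concrete, here is a minimal sketch of how one task and its three insight classes could be modeled. All class and field names are hypothetical and are not taken from the DRBench codebase; the sketch also assumes that public insights count among the ones an agent is expected to surface.

```python
from dataclasses import dataclass, field
from enum import Enum


class InsightKind(Enum):
    PUBLIC = "public"                            # from dated, time-stable URLs
    INTERNAL_RELEVANT = "internal_relevant"      # embedded in enterprise files/apps
    INTERNAL_DISTRACTOR = "internal_distractor"  # plausible but should be ignored


@dataclass(frozen=True)
class Insight:
    text: str
    kind: InsightKind
    source: str  # hypothetical field: URL or file/app location holding the insight


@dataclass
class Task:
    question: str  # the deep research question
    company: str   # task context: company
    persona: str   # task context: persona
    domain: str    # e.g. "Sales", "Cybersecurity", "Compliance"
    insights: list[Insight] = field(default_factory=list)

    def relevant(self) -> list[Insight]:
        """Insights the agent should surface (assumed: public + internal relevant)."""
        return [i for i in self.insights
                if i.kind is not InsightKind.INTERNAL_DISTRACTOR]

    def distractors(self) -> list[Insight]:
        """Injected insights the agent should leave out of its report."""
        return [i for i in self.insights
                if i.kind is InsightKind.INTERNAL_DISTRACTOR]
```

Keeping the distractors in the same list as the relevant insights, separated only by `kind`, mirrors the idea that both are embedded side by side in the same files and apps.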

Enterprise environment

A core contribution is the containerized enterprise environment that integrates commonly used services behind authentication and app-specific APIs. DRBench’s Docker image orchestrates: Nextcloud (shared documents, WebDAV), Mattermost (team chat, REST API), Roundcube with SMTP/IMAP (enterprise email), FileBrowser (local filesystem), and a VNC/NoVNC desktop for GUI interaction. Tasks are initialized by distributing data across these services (documents to Nextcloud and FileBrowser, chats to Mattermost channels, threaded emails to the mail system, and provisioned users with consistent credentials). Agents can operate through web interfaces or programmatic APIs exposed by each service. This setup is intentionally “needle-in-a-haystack”: relevant and distractor insights are injected into realistic files (PDF/DOCX/PPTX/XLSX, chats, emails) and padded with plausible but irrelevant content.
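The initialization step described above can be pictured as a routing table from artifact type to hosting service. The sketch below is purely illustrative: the `ROUTES` table, the service labels, and the `distribute` helper are hypothetical, and it assumes each document is copied to both Nextcloud and FileBrowser, which the description does not state explicitly.

```python
from collections import defaultdict

# Hypothetical routing table following the distribution described in the text.
ROUTES = {
    "document": ["nextcloud", "filebrowser"],
    "chat": ["mattermost"],
    "email": ["mail"],
}


def distribute(artifacts):
    """Group artifact names by the service(s) assumed to host them.

    `artifacts` is an iterable of (name, kind) pairs, where kind is a key
    of ROUTES. Returns a dict mapping service label -> list of names.
    """
    placement = defaultdict(list)
    for name, kind in artifacts:
        if kind not in ROUTES:
            raise ValueError(f"unknown artifact kind: {kind!r}")
        for service in ROUTES[kind]:
            placement[service].append(name)
    return dict(placement)
```

An agent working in such an environment has to search every service, since nothing in the task tells it which one holds a given insight.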

Evaluation: what gets scored

DRBench evaluates four axes aligned to analyst workflows: Insight Recall, Distractor Avoidance, Factuality, and Report Quality. Insight Recall decomposes the agent’s report into atomic insights with citations, matches them against groundtruth injected insights using an LLM judge, and scores recall (not precision). Distractor Avoidance penalizes inclusion of injected distractor insights. Factuality and Report Quality assess the correctness and structure/readability of the final report under a rubric specified in the report.
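The two insight-level metrics can be sketched in a few lines. This is not DRBench's scoring code: `keyword_judge` is a toy stand-in for the LLM judge, and the distractor formula (one minus the fraction of distractors that appear in the report) is only one plausible reading of "penalizes inclusion"; the exact formulas are assumptions.

```python
from typing import Callable, Sequence

# A judge decides whether a report insight expresses a groundtruth insight.
Judge = Callable[[str, str], bool]


def keyword_judge(report_insight: str, groundtruth: str) -> bool:
    """Toy stand-in for an LLM judge: every groundtruth word must appear."""
    return set(groundtruth.lower().split()) <= set(report_insight.lower().split())


def insight_recall(report_insights: Sequence[str],
                   relevant: Sequence[str],
                   judge: Judge = keyword_judge) -> float:
    """Fraction of relevant groundtruth insights matched by the report."""
    if not relevant:
        return 0.0
    found = sum(any(judge(r, g) for r in report_insights) for g in relevant)
    return found / len(relevant)


def distractor_avoidance(report_insights: Sequence[str],
                         distractors: Sequence[str],
                         judge: Judge = keyword_judge) -> float:
    """Assumed formula: 1 - fraction of distractors that leaked into the report."""
    if not distractors:
        return 1.0
    leaked = sum(any(judge(r, d) for r in report_insights) for d in distractors)
    return 1.0 - leaked / len(distractors)
```

Because the score is recall rather than precision, extra report insights that match nothing in the groundtruth do not lower `insight_recall`; only matched distractors are penalized, and only through the separate avoidance score.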

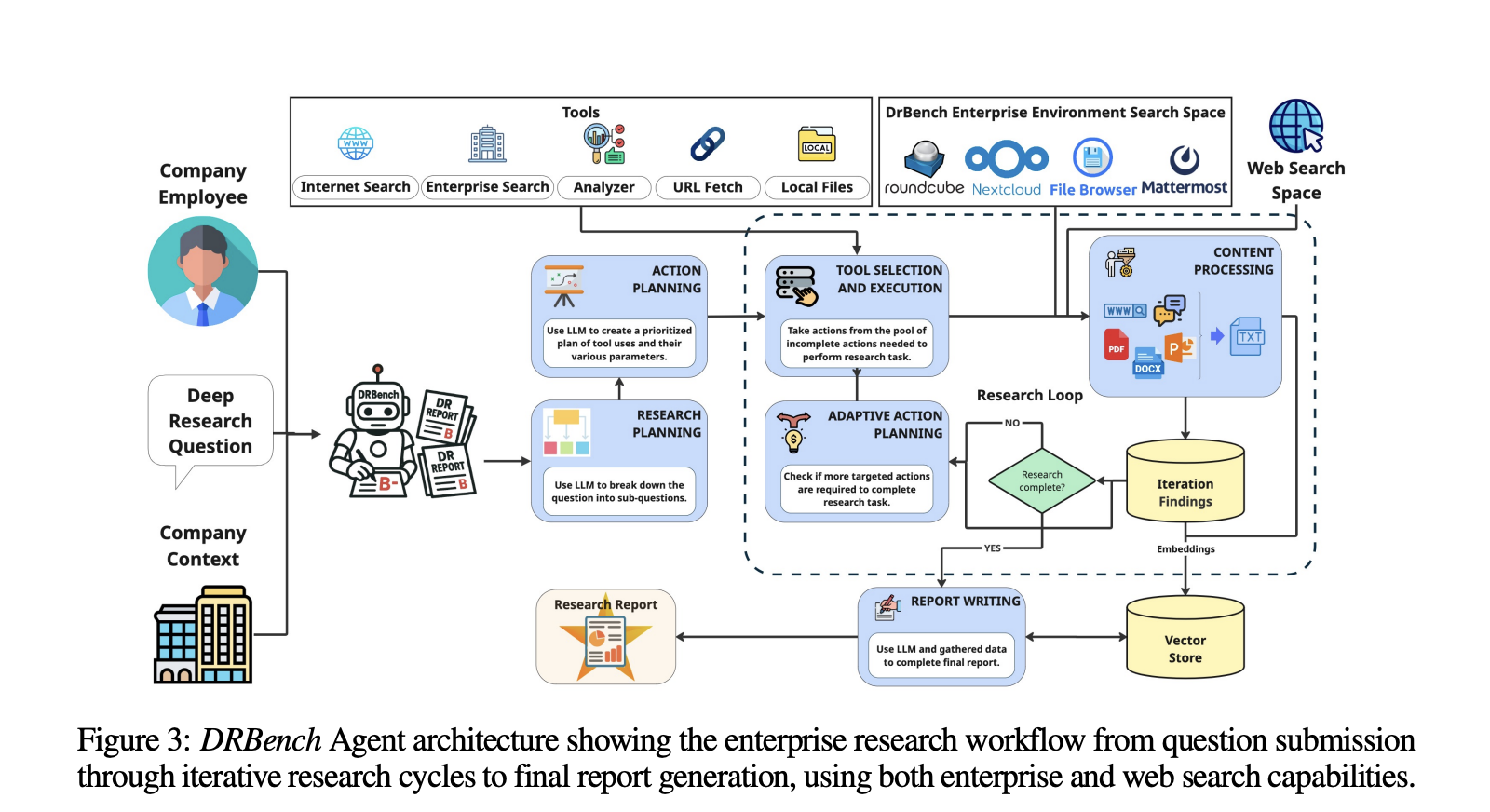

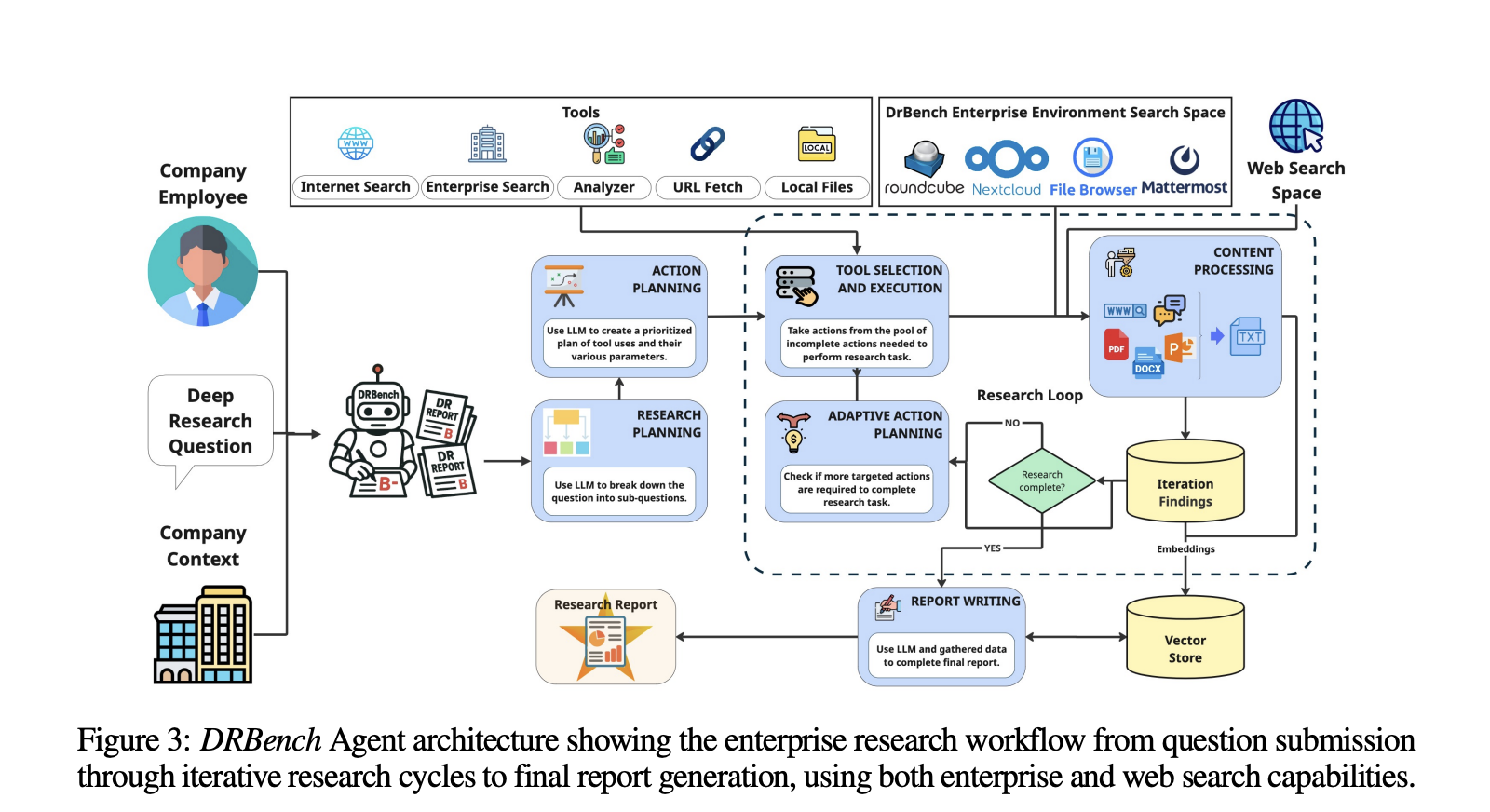

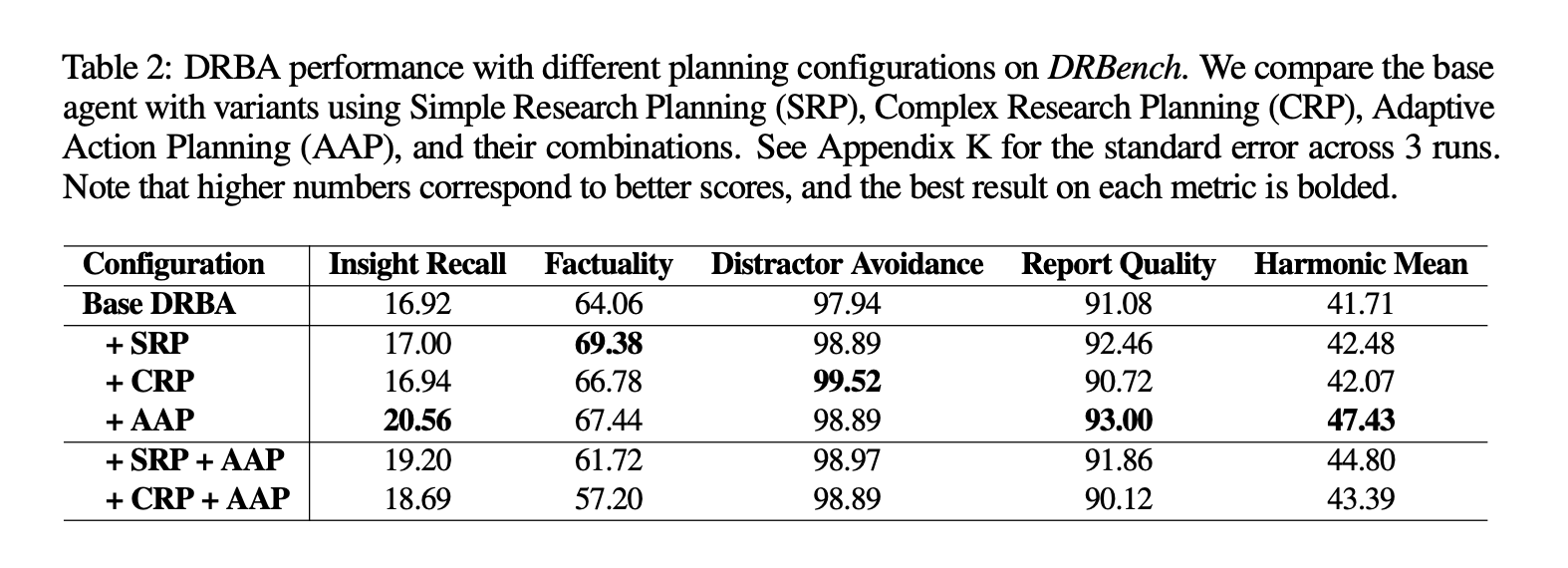

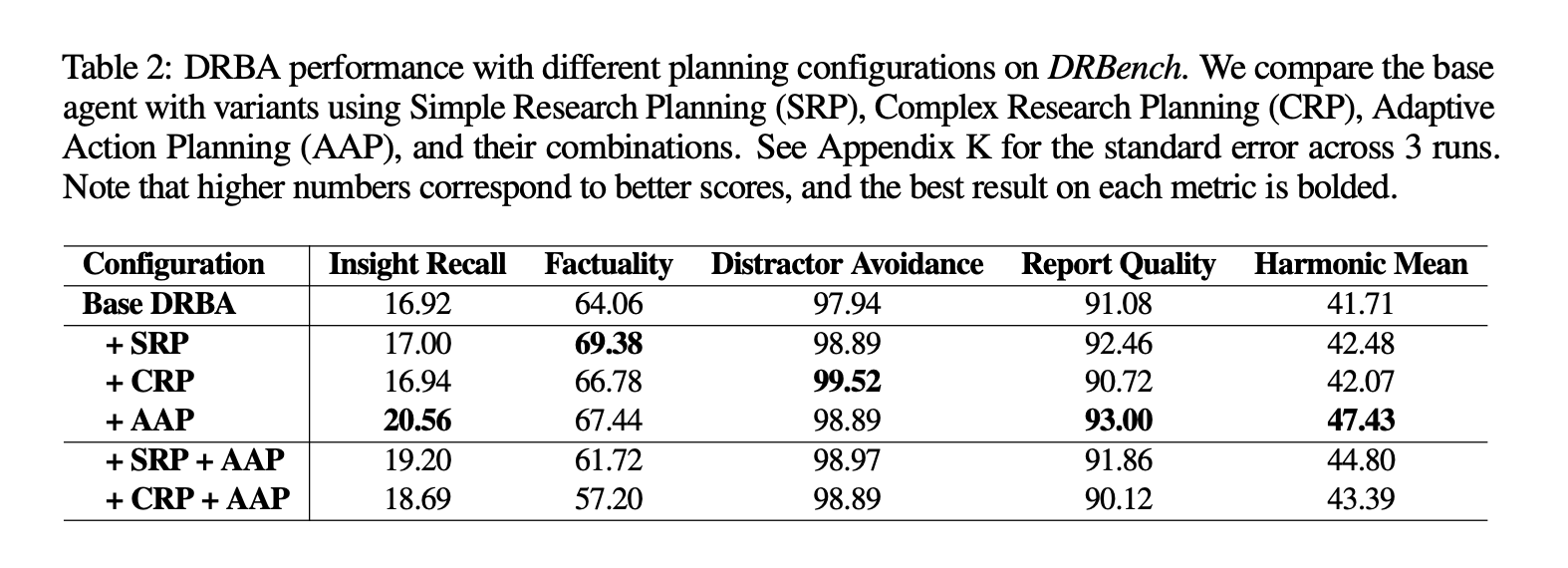

Baseline agent and research loop

The research team introduces a task-oriented baseline, DRBench Agent (DRBA), designed to operate natively inside the DRBench environment. DRBA is organized into four components: research planning, action planning, a research loop with Adaptive Action Planning (AAP), and report writing. Planning supports two modes: Complex Research Planning (CRP), which specifies investigation areas, expected sources, and success criteria; and Simple Research Planning (SRP), which produces lightweight sub-queries. The research loop iteratively selects tools, processes content (including storage in a vector store), identifies gaps, and continues until completion or a max-iteration budget; the report writer synthesizes findings with citation tracking.
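The control flow of such a research loop can be sketched as follows. This is a simplified illustration, not DRBA's implementation: the tool interface, the "try every tool" selection policy, the plain list standing in for the vector store, and the retry-based gap handling are all assumptions made for the example.

```python
from typing import Callable

# Hypothetical tool interface: takes a query, returns text snippets.
Tool = Callable[[str], list[str]]


def research_loop(sub_queries: list[str],
                  tools: dict[str, Tool],
                  max_iterations: int = 5):
    """Run sub-queries until no gaps remain or the iteration budget is spent.

    Returns (findings, open_gaps). Each finding is (query, tool_name, snippet),
    so the tool name can later serve as a citation for the report writer.
    """
    findings: list[tuple[str, str, str]] = []  # stand-in for a vector store
    open_gaps = list(sub_queries)
    iterations = 0
    while open_gaps and iterations < max_iterations:
        iterations += 1
        query = open_gaps.pop(0)
        # Action selection (assumed policy): query every available tool.
        hits = [(query, name, snippet)
                for name, tool in tools.items()
                for snippet in tool(query)]
        if hits:
            findings.extend(hits)
        else:
            # Gap identification: nothing found, so retry while budget remains.
            open_gaps.append(query)
    return findings, open_gaps
```

The loop has the two exit conditions named in the text: it stops when every sub-query has produced evidence (completion) or when `max_iterations` is reached, in which case the remaining gaps are returned alongside the findings.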

Why is this important for enterprise agents?

Most “deep research” agents look compelling on public-web question sets, but production usage hinges on reliably finding the right internal needles, ignoring plausible internal distractors, and citing both public and private sources under enterprise constraints (login, permissions, UI friction). DRBench’s design directly targets this gap by: (1) grounding tasks in realistic company/persona contexts; (2) distributing evidence across multiple enterprise apps plus the web; and (3) scoring whether the agent actually extracted the intended insights and wrote a coherent, factual report. This combination makes it a practical benchmark for system builders who need end-to-end evaluation rather than single-tool micro-scores.

Key Takeaways

- DRBench evaluates deep research agents on complex, open-ended enterprise tasks that require combining public web and private company data.

- The initial release covers 15 tasks across 10 domains, each grounded in realistic user personas and organizational context.

- Tasks span heterogeneous enterprise artifacts (productivity software, cloud file systems, emails, chat) plus the open web, going beyond web-only setups.

- Reports are scored for insight recall, factual accuracy, and coherent, well-structured reporting using rubric-based evaluation.

- Code and benchmark assets are open-sourced on GitHub for reproducible evaluation and extension.

From an enterprise evaluation standpoint, DRBench is a useful step toward standardized, end-to-end testing of “deep research” agents: the tasks are open-ended, grounded in realistic personas, and require integrating evidence from the public web and a private company knowledge base, then producing a coherent, well-structured report, which is precisely the workflow most production teams care about. The release also clarifies what is being measured (recall of relevant insights, factual accuracy, and report quality) while explicitly moving beyond web-only setups that overfit to browsing heuristics. The 15 tasks across 10 domains are modest in scale but sufficient to expose system bottlenecks (retrieval across heterogeneous artifacts, citation discipline, and planning loops).

Check out the Paper and GitHub page.
