Introduction

Over the past few years, advances in Artificial Intelligence (AI) have driven an exponential increase in the demand for GPU resources and electrical energy, leading to a global scarcity of high-performance GPUs, such as NVIDIA’s flagship chipsets. This scarcity has created a competitive and costly landscape. Organizations with the financial capacity to build their own AI infrastructure pay substantial premiums to maintain operations, while others rely on renting GPU resources from cloud providers, which comes with equally prohibitive and escalating costs. These infrastructures often operate under a “one-size-fits-all” model, in which organizations are forced to pay for AI-supporting resources that remain underutilized during extended periods of low demand, resulting in unnecessary expenditures.

The financial and logistical challenges of maintaining such infrastructure are well illustrated by examples like OpenAI, which, despite having approximately 10 million paying subscribers for its ChatGPT service, reportedly incurs significant daily losses due to the overwhelming operational expenses attributed to the tens of thousands of GPUs and the energy used to support AI operations. This raises critical concerns about the long-term sustainability of AI, particularly as demand and costs for GPUs and energy continue to rise.

Such costs can be significantly reduced by developing effective mechanisms that can dynamically discover and allocate GPUs in a semi-decentralized fashion that caters to the specific requirements of individual AI operations. Modern GPU allocation solutions must adapt to the varying nature of AI workloads and provide customized resource provisioning to avoid unnecessary idle states. They also need to incorporate efficient mechanisms for identifying optimal GPU resources, especially when resources are constrained. This can be challenging, as GPU allocation systems must accommodate the changing computational needs, priorities, and constraints of different AI tasks and implement lightweight and efficient methods to enable quick and effective resource allocation without resorting to exhaustive searches.

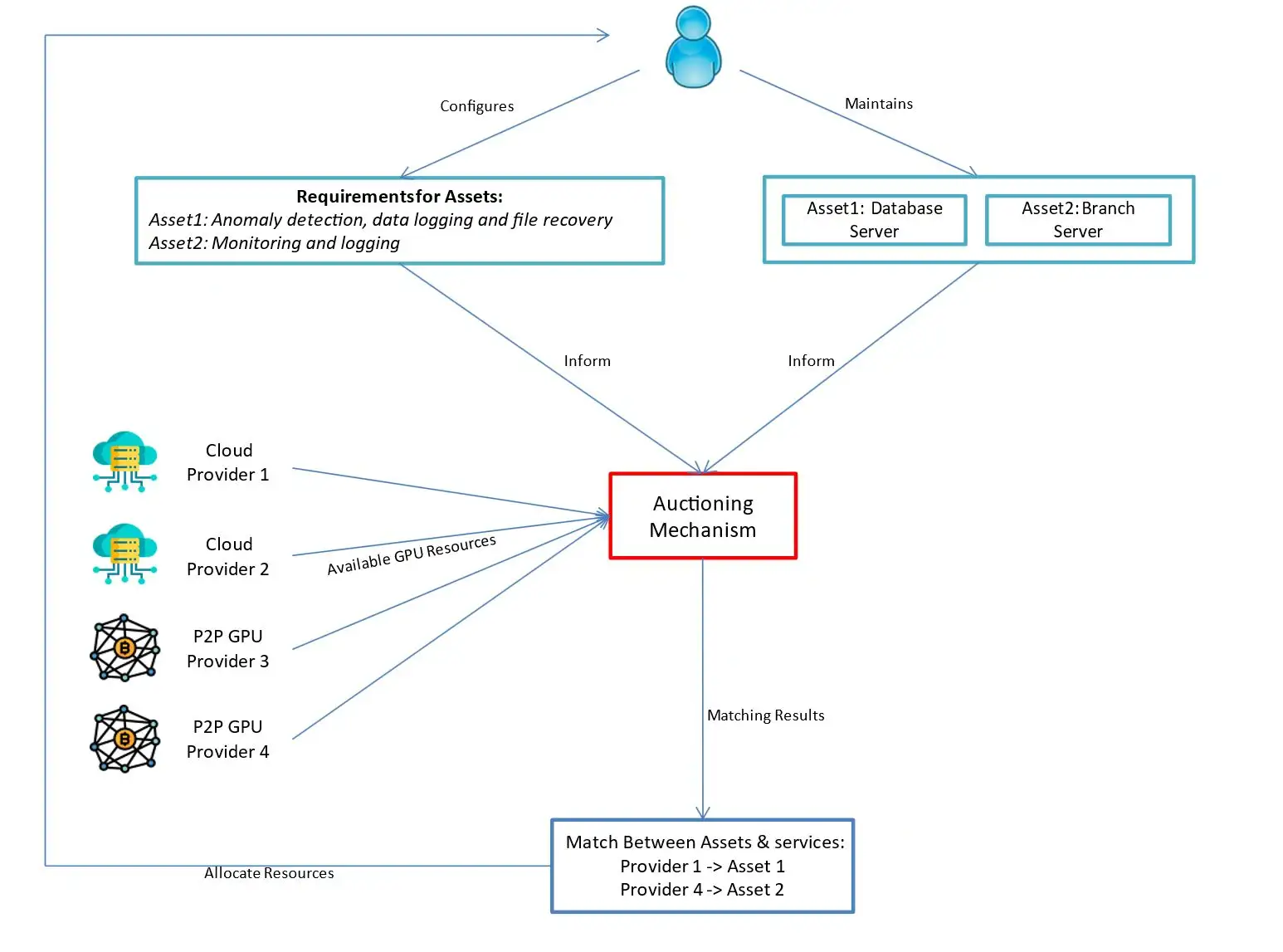

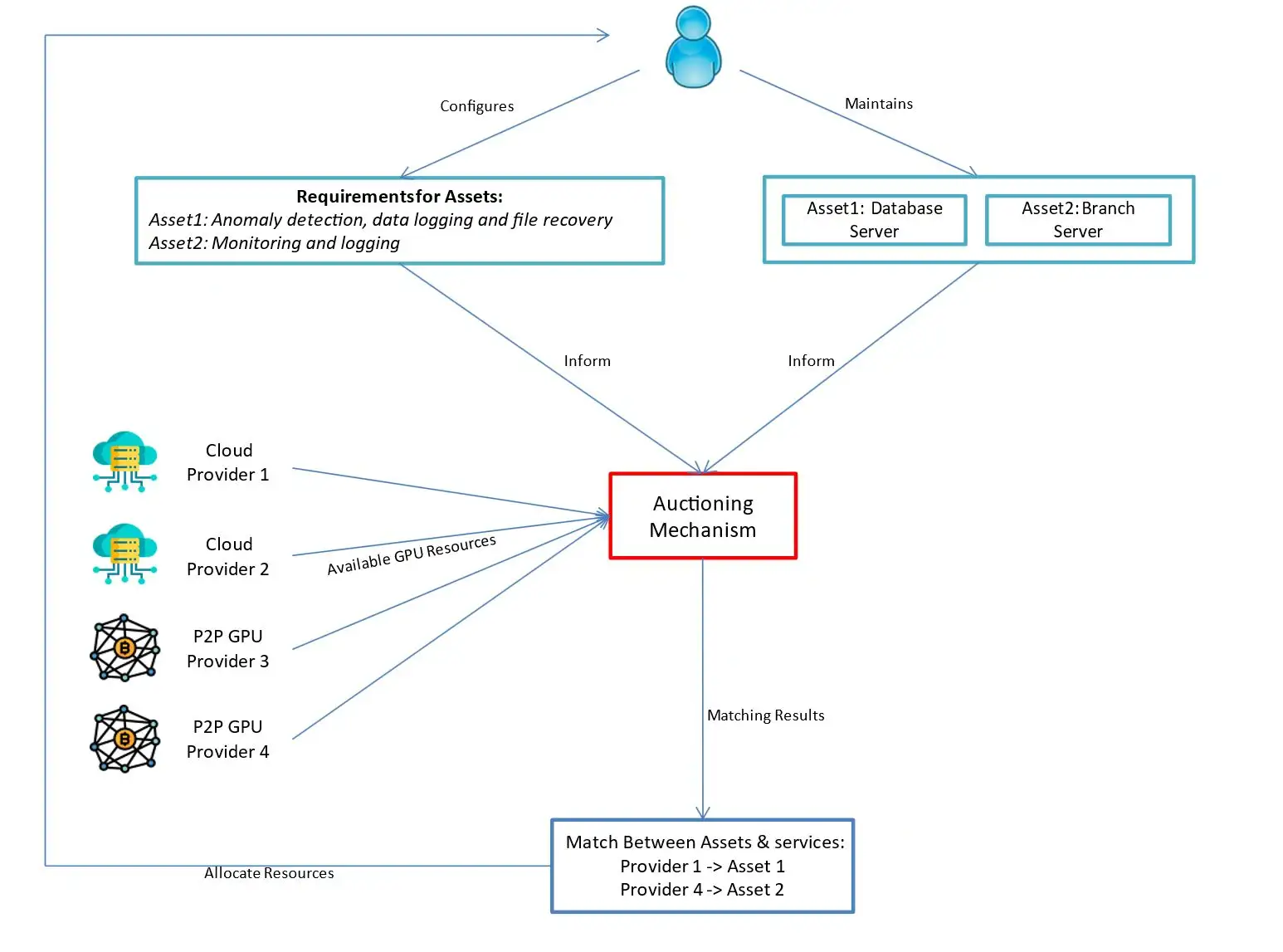

In this paper, we propose a self-adaptive GPU allocation framework that dynamically manages the computational needs of AI workloads of different assets / systems by combining a decentralized agent-based auction mechanism (e.g., English and Posted-offer auctions) with supervised learning techniques such as Random Forest.

The auction mechanism addresses the scale and complexity of GPU allocation while balancing trade-offs between competing resource requests in a distributed and efficient manner. The choice of auction mechanism can be tailored based on the operating environment as well as the number of providers and users (bidders) to ensure effectiveness. To further optimize the process, blockchain technology is incorporated into the auction mechanism. Using blockchain ensures secure, transparent, and decentralized resource allocation and a broader reach for GPU resources. Peer-to-peer blockchain projects (e.g., Render, Akash, Spheron, Gpu.net) that utilize idle GPU resources already exist and are widely used.

Meanwhile, the supervised learning component, specifically the Random Forest classification algorithm, enables proactive and automated decision-making by detecting runtime anomalies and optimizing resource allocation strategies based on historical data. By leveraging the Random Forest classifier, our framework identifies efficient allocation plans informed by past performance, avoiding exhaustive searches and enabling tailored GPU provisioning for AI workloads.

The Use of Market in the GPU Allocation Framework

Services and GPU resources can adapt to the changing computational needs of AI workloads in dynamic and shared environments. AI tasks can be optimized by selecting appropriate GPU resources that best meet their evolving requirements and constraints. The relationship between GPU resources and AI services is critical (Figure 1), as it captures not only the computational overhead imposed by AI tasks but also the efficiency and scalability of the solutions they provide. A unified model can be applied: each AI workload goal (e.g., training large language models) can be broken down into sub-goals, such as reducing latency, optimizing energy efficiency, or ensuring high throughput. These sub-goals can then be matched with the GPU resources most suitable to support the overall AI objective.

Given the multi-tenant and shared nature of Cloud-based and blockchain-enabled AI infrastructure, along with the high demand for GPUs, any allocation solution must be designed with a scalable architecture. Market-inspired methodologies present a promising solution to this problem, offering an effective optimization mechanism for continuously satisfying the varying computational requirements of multiple AI tasks. These market-based solutions empower both users and providers to independently make decisions that maximize their utility, while regulating the supply and demand of GPU resources to reach equilibrium. In scenarios with limited GPU availability, auction mechanisms can facilitate effective allocation by prioritizing resource requests based on urgency (reflected in bidding prices), ensuring that high-priority AI tasks receive the necessary resources.

Market models together with blockchain also bring transparency to the allocation process by establishing systematic procedures for trading and mapping GPU resources to AI workloads and sub-goals. Finally, the adoption of market principles can be seamlessly integrated by AI service providers, operating either on Cloud or blockchain, reducing the need for structural changes and minimizing the risk of disruptions to AI workflows.

Framework Overview (Using an Example)

Given our expertise in cybersecurity, we explore a GPU allocation scenario for a forensic AI system designed to support incident response during a cyberattack. “Company Z” (fictitious), a multinational financial services firm operating in 20 countries, manages a distributed IT infrastructure with highly sensitive data, making it a prime target for threat actors. To enhance its security posture, Company Z deploys a forensic AI system that leverages GPU acceleration to rapidly analyze and respond to incidents.

This AI-driven system consists of autonomous agents embedded across the company’s infrastructure, continuously monitoring runtime security requirements through specialized sensors. When a cyber incident occurs, these agents dynamically adjust security operations, leveraging GPUs and other computational resources to process threats in real time. However, outside of emergencies, the AI system primarily functions in a training and reinforcement learning capacity, making a dedicated AI infrastructure both costly and inefficient. Instead, Company Z adopts an on-demand GPU allocation model, ensuring high-performance, AI-driven forensic analysis while minimizing unnecessary resource waste. For the purposes of this example, we operate under the following assumptions:

Incident Overview

Company Z is under a ransomware attack affecting its internal databases and client records. The attack disrupts normal operations and threatens to leak and encrypt sensitive data. The forensic AI system needs to analyze the attack in real time, identify its root cause, assess its impact, and recommend mitigation steps. The forensic AI system requires GPUs for computationally intensive tasks, including the analysis of attack patterns in various log files, analysis of encrypted data, and support with guidance on recovery actions. The AI system relies on cloud-based and peer-to-peer blockchain GPU resource providers, which offer high-performance GPU instances for tasks such as deep learning model-based inference, data mining, and anomaly detection (Figure 2).

Dynamic Asset Needs

We take an asset-centric approach to security to ensure we tailor GPU usage per system and cater to its exact needs, instead of promoting a one-solution-fits-all approach that can be more costly. In this scenario, the assets considered include Company Z’s servers affected by the ransomware attack that need immediate forensic analysis. Each asset has a set of AI-related computational requirements based on the urgency of the response, sensitivity of the data, and severity of the attack. For example:

- The primary database server stores customer financial data and requires intensive GPU resources for anomaly detection, data logging and file recovery operations.

- A branch server, used for operational purposes, has lower urgency and requires minimal GPU resources for routine monitoring and logging tasks.

Initial Conditions

The forensic AI system begins by analyzing the ransomware’s root cause and lateral movement patterns. Company Z’s primary database server is classified as a critical asset with high computational demands, while the branch server is categorized as a medium-priority asset. The GPUs initially allocated are sufficient to perform these tasks. However, as the attack progresses, the ransomware begins to target encrypted backups. This is detected by the deployed agents, which trigger a re-prioritization of resource allocation.

Adaptation and Decision Making

The forensic AI system uses a Random Forest classifier to analyze the changing conditions captured by agent sensors in real time. It evaluates multiple factors:

- The urgency of tasks (e.g., whether the ransomware is actively encrypting more files).

- The sensitivity of the data (e.g., customer financial records vs. operational logs).

- Historical patterns of similar attacks and the associated GPU requirements.

- Historical analysis of incident responder actions on ransomware cases and their associated responses.

Based on these inputs, the system dynamically determines new resource allocation priorities. For instance, it may decide to allocate more GPUs to the primary database server to expedite anomaly detection, system containment and data recovery while reducing the resources assigned to the branch server.

Market-Inspired GPU Allocation

Given the scarcity of GPUs, the system leverages a decentralized agent-based auction mechanism to acquire additional resources from Cloud and peer-to-peer blockchain providers. Each agent submits a bidding price per asset, reflecting its computational urgency. The primary database server submits a high bid due to its critical nature, while the branch server submits a lower bid. These bids are informed by historical data, ensuring efficient use of available resources. The GPU providers respond with a variation of the Posted Offer auction. In this model, providers set GPU prices and the number of available instances for a specific time. Assets with the highest bids (indicating the most urgent needs) are prioritized for GPU allocation over the bids of other users and their assets in need of GPU resources.

As such, the primary database server successfully acquires additional GPUs due to its higher bidding price, prioritizing file recovery recommendations and anomaly detection. The branch server, with its lower bid, represents a low-priority task that is queued to wait for available GPU resources.

Evolving Requirements

As the ransomware attack spreads further, the sensors detect this activity. Based on historical patterns of similar attacks and their associated GPU requirements, a new high-priority task is created for analyzing and protecting encrypted backups to prevent data loss. This task introduces a new computational requirement, prompting the system to submit another bid for GPUs. The Random Forest algorithm identifies this task as critical and assigns a higher bidding price based on the sensitivity of the impacted data. The auction mechanism ensures that GPUs are dynamically allocated to this task, maintaining a balance between cost and urgency. Through this adaptive process, the forensic AI system successfully prioritizes GPU resources for the most critical tasks, ensuring that Company Z can quickly mitigate the ransomware attack and guide incident responders and security analysts in recovering sensitive data and restoring operations.

Security Considerations

Outsourcing GPU computation introduces risks related to data confidentiality, integrity, and availability. Sensitive data transmitted to external providers may be exposed to unauthorized access, whether through insider threats, misconfigurations, or side-channel attacks.

Additionally, malicious actors may manipulate computational results, inject false data, or interfere with resource allocation by inflating bids. Availability risks also arise if an attacker outbids critical assets, delaying important processes like anomaly detection or file recovery. Regulatory concerns further complicate outsourcing, as data residency and compliance laws (e.g., GDPR, HIPAA) may restrict where and how data is processed.

To mitigate these risks, where performance allows, we leverage encryption techniques such as homomorphic encryption to enable computations on encrypted data without exposing raw information. Trusted Execution Environments (TEEs) like Intel SGX provide secure enclaves that ensure computations remain confidential and tamper-proof. For integrity, zero-knowledge proofs (ZKPs) allow verification of correct computation without revealing sensitive details. In cases where large amounts of data need to be processed, differential privacy techniques can be used to conceal individual data points in datasets by adding controlled random noise. Additionally, blockchain-based smart contracts can enhance auction transparency, preventing price manipulation and unfair resource allocation.

From an operational perspective, implementing a multi-cloud or hybrid strategy reduces dependency on a single provider, enhancing availability and redundancy. Strong access controls and monitoring help detect unauthorized access or tampering attempts in real time. Finally, enforcing strict service-level agreements (SLAs) with GPU providers ensures accountability for performance, security, and regulatory compliance. By combining these mitigations, organizations can securely leverage external GPU resources while minimizing potential threats.

Conceptual Market-based Architecture

This section provides a high-level analysis of the entities and operation phases of the proposed framework.

Agents

Agents are autonomous entities that represent users in the “GPU market”. An agent is responsible for using its sensors to monitor changes in the run-time AI goals and sub-goals of assets and to trigger adaptation for resources. By maintaining data records for each AI operation, it is feasible to construct training datasets to inform the Random Forest algorithm to replicate such behavior and allocate GPUs in an automated manner. To adapt, the Random Forest algorithm examines the recorded historical data of a user and its assets to discover correlations between previous AI operations (including their associated GPU usage) and the current situation. The results from the Random Forest algorithm are then used to construct a specification, called a bid, which reflects the exact AI needs and supporting GPU resources. The bid consists of different attributes that depend on the problem domain. Once a bid is formed, it is forwarded to the coordinator (auctioneer) for auctioning.

GPU Resource Providers (GRP)

Cloud service and peer-to-peer GPU providers are vendors that trade their GPU resources in the market. They are responsible for publicly announcing their offers (called asks) to the coordinator. The asks contain a specification of the traded resources together with the price that they want to sell them at. In case of a match between an ask and a bid, the GRP allocates the required GPU resources to the winning agent to support their AI operations. Thus, each user has access to different configurations of GPU resources that may be provided by different GRPs.

Coordinator

The coordinator is a centralized software system that functions as both an auctioneer and a market regulator, facilitating the allocation of GPU resources. Positioned between agents and GPU resource providers (GRPs), it manages trading rounds by collecting and matching bids from agents with provider offers. Once the auction process is finalized, the coordinator no longer interacts directly with users and providers. However, it continues to oversee compliance with Service Level Agreements (SLAs) and ensures that allocated resources are properly assigned to users as agreed.

System Operation Phases

The proposed framework consists of four (4) phases running in a continuous cycle. The cycle starts with monitoring, which passes all relevant data to analysis. Analysis informs the adaptation process, which in turn triggers feedback (allocation of required resources) to meet the changing AI operational requirements. Once a set of AI operational requirements is met, the monitoring phase begins again to detect new changes. The operational phases are as follows:

Monitor Phase

Sensors operate on the agent side to detect changes in security. The type of data collected varies depending on the specific problem being addressed (security or otherwise). For example, in the case of AI-driven threat detection, relevant changes impacting security might include:

Behavioral indicators:

- Process Execution Patterns: Monitoring unexpected or suspicious processes (e.g., execution of PowerShell scripts, unusual system calls).

- Network Traffic Anomalies: Detecting abnormal spikes in data transfer, communication with known malicious IPs, or unauthorized protocol usage.

- File Access and Modification Patterns: Identifying unauthorized file encryption (potential ransomware), unusual deletions, or repeated failed access attempts.

- User Activity Deviations: Analyzing deviations in system usage patterns, such as excessive privilege escalations, rapid data exfiltration, or abnormal working hours.

Content-based threat indicators:

- Malicious File Signatures: Scanning for known malware hashes, embedded exploits, or suspicious scripts in documents, emails, or downloads.

- Code and Memory Analysis: Detecting obfuscated code execution, process injection, or suspicious memory manipulations (e.g., Reflective DLL Injection, shellcode execution).

- Log File Anomalies: Identifying irregularities in system logs, such as log deletion, event suppression, or manipulation attempts.

Anomaly-based detection:

- Unusual Privilege Escalations: Monitoring unexpected admin access, unauthorized privilege elevation, or lateral movement across systems.

- Resource Consumption Spikes: Monitoring unexplained high CPU/GPU usage, potentially indicating cryptojacking or denial-of-service (DoS) attacks.

- Data Exfiltration Patterns: Detecting large outbound data transfers, unusual data compression, or encrypted payloads sent to external servers.

Threat intelligence and correlation:

- Threat Feed Integration: Matching observed network behavior with real-time threat intelligence sources for known indicators of compromise (IoCs).

The data collected by the sensors is then fed into a watchdog process, which continuously monitors for any changes that could impact AI operations. This watchdog identifies shifts in security conditions or system behavior that may influence how GPU resources are allocated and consumed. For instance, if an AI agent detects an unusual login attempt from a high-risk location, it may require additional GPU resources to perform more intensive threat analysis and recommend appropriate actions for enhanced security.

Analysis Phase

During the analysis phase, the data recorded from the sensors is examined to determine whether the existing GPU resources can satisfy the runtime AI operational goals and sub-goals of an asset. Where they are deemed insufficient, adaptation is triggered. We adopt a goal-oriented approach to map security goals to their sub-goals. Significant changes to the dynamics of one or more interrelated sub-goals can trigger the need for adaptation. As adaptation is expensive, the frequency of adaptation can be determined by considering the extent to which the security goals and sub-goals diverge from the tolerance level.
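The tolerance check described above can be sketched as follows. This is a minimal illustration, not the framework's actual implementation: the `SubGoal` fields, the relative-shortfall measure, and the rule "adapt if any sub-goal exceeds its tolerance" are all assumptions introduced here.

```python
from dataclasses import dataclass


@dataclass
class SubGoal:
    """Hypothetical record of one AI sub-goal as seen by the analysis phase."""
    name: str
    target: float     # level the sub-goal requires (e.g., throughput)
    observed: float   # level achieved with the currently allocated GPUs
    tolerance: float  # acceptable relative shortfall, e.g., 0.10 for 10%


def shortfall(goal: SubGoal) -> float:
    """Relative amount by which the observed level falls below the target."""
    if goal.target <= 0:
        return 0.0
    return max(0.0, (goal.target - goal.observed) / goal.target)


def needs_adaptation(goals: list[SubGoal]) -> bool:
    """Trigger adaptation only when some sub-goal diverges beyond its tolerance."""
    return any(shortfall(g) > g.tolerance for g in goals)
```

Because adaptation is expensive, a wider tolerance makes the check fire less often, at the cost of running longer with a degraded sub-goal.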

Adaptation Phase

Adaptation involves bid formulation by agents, ask formulation by GPU providers, and the auctioning process to determine optimal matches. It also includes the allocation of GPU resources to users. The adaptation process operates as follows.

Bid Formulation

Adaptation begins with the creation of a bid that requests the discovery, selection and allocation of GPU resources from different GRPs in the market. The bid is constructed with the support of the Random Forest algorithm, which identifies the optimal course of action for adaptation based on previously encountered AI operations and their GPU usage. Using an ensemble classifier such as Random Forest helps mitigate bias and overfitting, because averaging many trees reduces the high variance of individual decision trees. The constructed bids consist of the following attributes: i) the asset associated with the AI operations; ii) the criticality of the operations; iii) the sub-goals that require support; iv) an approximate amount of GPU resources that will be used; and v) the highest price that a user is willing to pay (this can be calculated by taking the average value of all similar historical bids).
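As a rough illustration, the five bid attributes listed above could be held in a small record like the one below. The field names, the integer criticality scale, and the `form_bid` helper are hypothetical; only the idea of averaging similar historical bids for the maximum price comes from the text.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class Bid:
    asset: str                  # i) asset associated with the AI operations
    criticality: int            # ii) assumed scale: higher means more critical
    sub_goals: tuple[str, ...]  # iii) sub-goals that require support
    gpu_units: int              # iv) approximate amount of GPU resources
    max_price: float            # v) highest price the user is willing to pay


def form_bid(asset, criticality, sub_goals, gpu_units, similar_historical_prices):
    """Build a bid whose maximum price is the mean of similar historical bids."""
    if not similar_historical_prices:
        raise ValueError("at least one similar historical bid is required")
    return Bid(asset, criticality, tuple(sub_goals), gpu_units,
               mean(similar_historical_prices))
```

For example, `form_bid("primary-db", 3, ["anomaly_detection"], 8, [10.0, 12.0, 14.0])` yields a bid with a maximum price of 12.0.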

To determine how the choice of an auction can affect the cost of a solution for users, the proposed framework considers two dominant market mechanisms, namely the English auction and a variant of the Posted-offer auction model. Consequently, we use two different methods to calculate the bidding prices when forming bids. Our modified Posted Offer auction model operates on a take-it-or-leave-it basis. In this model, the GRPs publicly announce the resources being traded along with their associated costs for a certain trading period. During the trading period, agents are selected (one at a time) in descending order based on their bidding prices (instead of being selected randomly) and allowed to accept or decline GRP offers. Introducing user bidding prices into the Posted Offer model makes it possible for the self-adaptive system to determine whether a user can afford to pay a seller’s requested price, thereby automating the selection process. It also provides a heuristic for ranking / selecting users based on the criticality of their requests. The auctioning round continues until all buyers have received service, or until all offered GPU resources have been allocated. Agents determine their bidding prices in Posted Offer by calculating the average value of all historical bidding prices of similar nature and criticality and then increasing or decreasing that value by a percentage “p”. The calculated bidding price is the highest price that a user is willing to bid in an auction. Once the bidding price is calculated, the agent adds the price, together with the other required attributes, to a bid.
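A minimal sketch of the Posted Offer price calculation and the resulting accept-or-decline decision is shown below. Expressing “p” as a signed fraction (for example, 0.10 to raise the average by 10% or -0.10 to lower it) is an assumption made for this illustration, as are the function names.

```python
from statistics import mean


def posted_offer_bid_price(similar_historical_prices, p):
    """Average of similar historical bidding prices, adjusted by fraction p.

    p is assumed to be a signed fraction: 0.10 raises the average by 10%,
    -0.10 lowers it by 10%.
    """
    return mean(similar_historical_prices) * (1 + p)


def accepts_offer(bid_price, posted_price):
    """Take-it-or-leave-it: accept only if the posted price is affordable."""
    return posted_price <= bid_price
```

With historical prices of 10.0 and 20.0 and p = 0.5, the bidding price is 22.5, so an offer posted at 20.0 would be accepted and one posted at 25.0 declined.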

Similarly, the English auction procedure follows similar steps to the Posted Offer model to calculate bidding prices. In the English auction model, the bidding price starts at a low price (established by the GRPs) and then rises incrementally, as progressively higher bids are solicited until the auction is closed or no higher bids are received. Therefore, each agent calculates its highest bidding price by considering the closing prices of completed auctions, in contrast to the fixed bidding prices used in the Posted Offer model.

Ask Formulation

GRPs, for their part, form their offers / asks, which they forward to the coordinator for auctioning. GRPs determine the price of their GPU resources based on the historical data of submitted asks. A possible way to calculate the selling price is to take the average value of previously submitted ask prices and then subtract or add a percentage “p” to that value, depending on the profit margin a GRP wishes to make. Once the selling price is calculated, the GRP encapsulates the price together with a specification of the offered resources in an ask. Once the ask is created, it is forwarded to the auction coordinator.

Auctioning

Once bids and asks are received, the coordinator enters them in an auction to discover GPU resources that can best satisfy the AI operational goals and sub-goals of different assets and users, while catering for optimal costs. Depending on the method chosen for calculating the bid and ask prices (i.e., Posted Offer or English auction), there is a corresponding procedure for auctioning.

In the case where the Posted Offer method is employed, the coordinator discovers GRPs that can support the runtime AI goals and sub-goals of an asset / user by comparing the resource specification in an ask with the bid specification. In particular, the coordinator compares the amount of GPU resources and the price to determine the suitability of a service for an agent. Where an ask violates any of the specified requirements and constraints of an asset (e.g., a service offers inadequate computational resources), the ask is eliminated. Upon elimination of all unsuitable asks, the coordinator sorts agents in descending price order to rank them based on the criticality of their bids / requests. The auctioneer then selects agents (one at a time) starting from the top of the list to allow them to purchase the needed resources until all agents are served or until all available units are sold.
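The Posted Offer matching steps above can be sketched as a short loop. The record fields are hypothetical, and picking the cheapest suitable ask for each agent is an assumption of this sketch; the text only requires that unsuitable asks are eliminated and that agents are served in descending price order.

```python
from dataclasses import dataclass


@dataclass
class Bid:
    agent: str
    gpu_units: int    # GPU units requested
    max_price: float  # highest price per offer the agent will pay


@dataclass
class Ask:
    provider: str
    gpu_units: int    # GPU units still available
    price: float      # posted, fixed price


def run_posted_offer(bids, asks):
    """Return {agent: provider}; note that ask.gpu_units is updated in place."""
    allocations = {}
    # Rank agents by bidding price, highest (most critical) first.
    for bid in sorted(bids, key=lambda b: b.max_price, reverse=True):
        # Eliminate asks that violate the bid's resource or price constraints.
        suitable = [a for a in asks
                    if a.gpu_units >= bid.gpu_units and a.price <= bid.max_price]
        if not suitable:
            continue  # the agent stays unserved in this round
        chosen = min(suitable, key=lambda a: a.price)  # assumption: cheapest wins
        chosen.gpu_units -= bid.gpu_units
        allocations[bid.agent] = chosen.provider
    return allocations
```

In a round where a primary database agent bids 12.0 for 8 units and a branch agent bids 5.0 for 2 units, against asks of 8 units at 10.0 and 2 units at 6.0, only the primary database agent is matched; the branch agent cannot afford the remaining offer and waits for a later round.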

Where the English auction is used, the coordinator discovers all ongoing auctions that satisfy the computational requirements and bidding price, and places a bid on behalf of the agent. The bidding price reflects the current highest price in an auction plus a bid increment value “p”. The bid increment is the minimum amount by which an agent’s bid can be raised to become the highest bidder. The bid increment value can be determined based on the highest bid in an auction. These values are case specific, and they can be altered by agents according to their runtime needs and the market prices. Where a rival agent tries to outbid the winning agent, the outbid agent automatically increases its bidding price to remain the highest bidder, while ensuring that the highest price specified in its bid is not exceeded. The winning auction, in which a match occurs, is the one in which an agent has placed a bid and, upon completion of the auction round, has remained the highest bidder. Submitting multiple bids to more than one auction that trades similar resources is permitted to increase the likelihood of a match occurring. If a match occurs and the agent has placed bids in other ongoing auctions that trade similar services/resources, those remaining bids are discarded.
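The automatic outbidding behaviour in a single English auction can be sketched as below. This is a simplified illustration: it assumes a fixed increment, a fixed order in which agents respond, and hypothetical function names, whereas the text allows increments to vary with the current highest bid.

```python
def next_bid(current_highest, increment, max_price):
    """Bid needed to take the lead, or None if it would exceed the agent's cap."""
    candidate = current_highest + increment
    return candidate if candidate <= max_price else None


def run_english_auction(start_price, increment, max_prices):
    """Simulate automatic outbidding among agents.

    max_prices maps each agent to the highest price stated in its bid.
    Returns (winner, highest_bid); winner is None if nobody could bid.
    """
    if increment <= 0:
        raise ValueError("increment must be positive")
    highest, leader = start_price, None
    raised = True
    while raised:
        raised = False
        for agent, cap in max_prices.items():
            if agent == leader:
                continue  # the current leader has no reason to raise
            bid = next_bid(highest, increment, cap)
            if bid is not None:
                highest, leader, raised = bid, agent, True
    return leader, highest
```

With a starting price of 5.0, an increment of 1.0, and caps of 12.0 for the primary database agent and 8.0 for the branch agent, the bidding stops once the branch agent can no longer raise, and the primary database agent remains the highest bidder.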

Feedback Phase

Once a match occurs, the feedback phase is initiated, during which the coordinator notifies the winning GRP and agent to begin the trade. The agent is asked to forward the payment for the won resources to the GRP. The transaction is recorded by the coordinator to ensure that no party can misrepresent the validity of the payment and allocation. Where the auctioning was performed based on the English auction, the agent needs to pay the price of the second highest bid plus a defined bid increment, while if the Posted Offer auction was used, the fixed price set by a GRP is paid. Once payment is received, the GRP releases the requested resources. Resource allocation can be performed in two ways, depending on the GRP: either through a cloud container providing access to all GPU resources within the environment, or by creating a network drive that enables a direct, local interface to the user’s system. The coordinator is paid for its auctioning services by adding a small commission fee for every successful match, which is equally split between the winning agent and GRP.
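The payment rules above can be sketched as follows. The commission rate is a placeholder, since the text only says the fee is small, and the reading that the agent pays half the fee on top of the price while the GRP has half deducted is one possible interpretation of the equal split.

```python
def english_clearing_price(second_highest_bid, increment):
    """English auction: second highest bid plus the defined bid increment."""
    return second_highest_bid + increment


def settle(price, commission_rate=0.02):
    """Split the coordinator's commission equally between agent and GRP.

    price is the clearing price: the GRP's fixed price under Posted Offer,
    or english_clearing_price(...) under the English auction.
    commission_rate is an assumed placeholder value.
    """
    commission = price * commission_rate
    half = commission / 2
    return {
        "agent_pays": price + half,
        "grp_receives": price - half,
        "coordinator_fee": commission,
    }
```

Under these assumptions, a second highest bid of 8.0 with an increment of 1.0 clears at 9.0; at a 2% commission the agent pays about 9.09, the GRP receives about 8.91, and the coordinator keeps about 0.18.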
