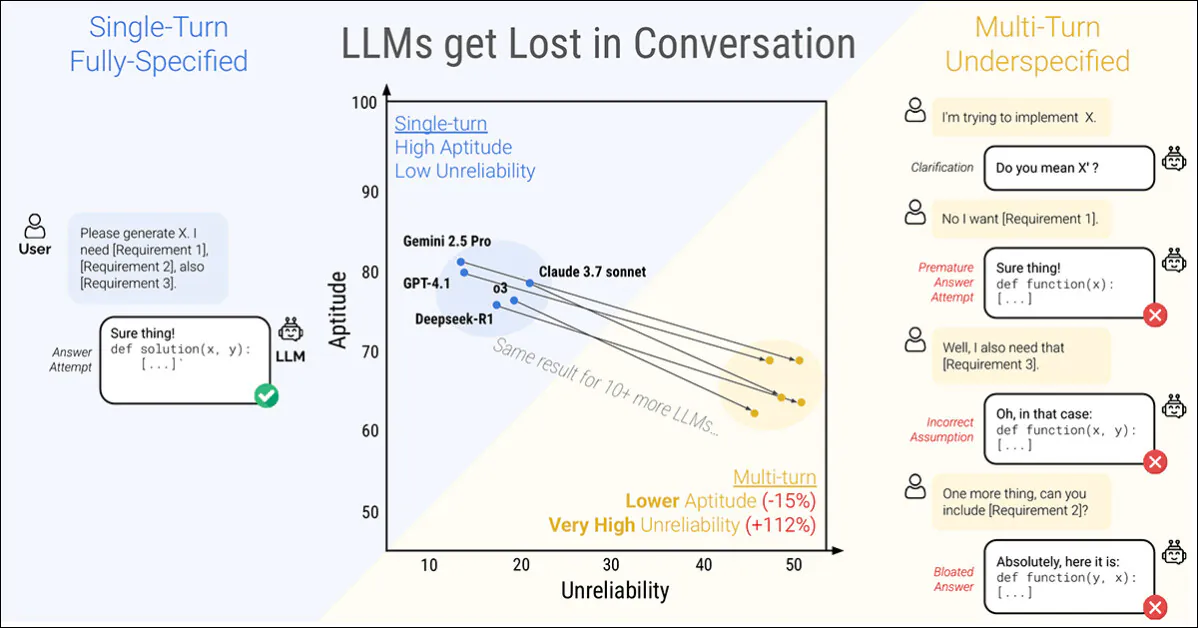

A brand new paper from Microsoft Analysis and Salesforce finds that even probably the most succesful Giant Language Fashions (LLMs) crumble when directions are given in levels reasonably than suddenly. The authors discovered that efficiency drops by a mean of 39 % throughout six duties when a immediate is break up over a number of turns:

A single flip dialog (left) obtains one of the best outcomes, however is unnatural for the end-user. A multi-turn dialog (proper) finds even the highest-ranked and most performant LLMs shedding the efficient impetus in a dialog. Supply: https://arxiv.org/pdf/2505.06120

Extra strikingly, the reliability of responses takes a nosedive, with prestigious fashions resembling ChatGPT-4.1 and Gemini 2.5 Professional swinging between near-perfect solutions and manifest failures, relying on how the identical activity is phrased; additional, output consistency can drop by greater than half within the course of.

To discover this habits, the paper introduces a way known as sharding*, which splits fully-specified prompts into smaller fragments and releases them separately right into a dialog.

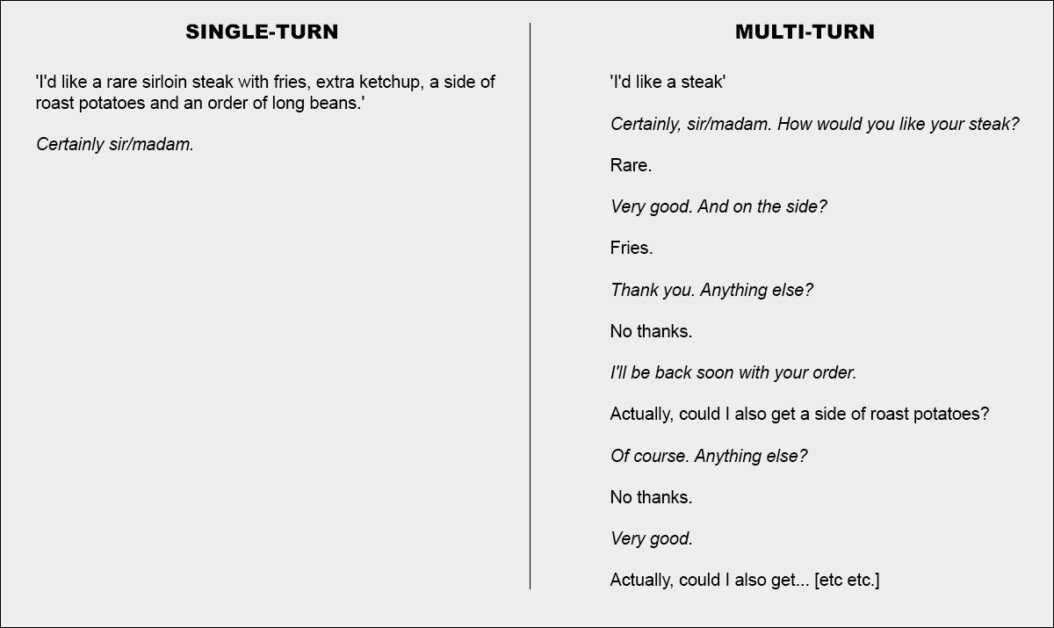

In probably the most fundamental phrases, that is equal to giving a cohesive and complete single order at a restaurant, leaving the waiter with nothing to do however acknowledge the request; or else deciding to assault the matter collaboratively:

Two excessive variations of a restaurant dialog (not from the brand new paper, for illustrative functions solely).

For emphasis, the instance above maybe places the shopper in a unfavourable mild. However the core thought depicted within the second column is that of a transactional alternate that clarifies a problem-set, previous to addressing the issues – apparently a rational and cheap method of approaching a activity.

This setup is mirrored within the new work’s drip-fed, sharded strategy to LLM interplay. The authors word that LLMs typically generate overly lengthy responses after which proceed to depend on their very own insights even after these insights have been proven to be incorrect, or irrelevant. This tendency, mixed with different elements, may cause the system to lose observe of the alternate completely.

The truth is, the researchers word what many people have discovered anecdotally – that one of the simplest ways to get the dialog again on observe is to begin a brand new dialog with the LLM.

‘If a dialog with an LLM didn’t result in anticipated outcomes, beginning a brand new dialog that repeats the identical info may yield considerably higher outcomes than persevering with an ongoing dialog.

‘It is because present LLMs can get misplaced within the dialog, and our experiments present that persisting in a dialog with the mannequin is ineffective. As well as, since LLMs generate textual content with randomness, a brand new dialog might result in improved outcomes.’

The authors acknowledge that agentic methods resembling Autogen or LangChain can doubtlessly enhance the outcomes by performing as interpretative layers between the end-user and the LLM, solely speaking with the LLM once they have gathered sufficient ‘sharded’ responses to coagulate right into a single cohesive question (which the end-user won’t be uncovered to).

Nevertheless, the authors contend {that a} separate abstraction layer shouldn’t be essential, or else be constructed straight into the supply LLM:

‘An argument might be made that multi-turn capabilities should not a essential characteristic of LLMs, as it may be offloaded to the agent framework. In different phrases, do we want native multi-turn help in LLMs when an agent framework can orchestrate interactions with customers and leverage LLMs solely as single-turn operators?…’

However having examined the proposition throughout their array of examples, they conclude:

‘[Relying] on an agent-like framework to course of info could be limiting, and we argue LLMs ought to natively help multi-turn interplay’

This fascinating new paper is titled LLMs Get Misplaced In Multi-Flip Dialog, and comes from 4 researchers throughout MS Analysis and Salesforce,

Fragmented Conversations

The brand new technique first breaks down standard single-turn directions into smaller shards, designed to be launched at key moments throughout an LLM interplay, a construction that displays the exploratory, back-and-forth type of engagement seen in methods resembling ChatGPT or Google Gemini.

Every authentic instruction is a single, self-contained immediate that delivers your entire activity in a single go, combining a high-level query, supporting context, and any related situations. The sharded model breaks this into a number of smaller components, with every shard including only one piece of knowledge:

Paired directions exhibiting (a) a whole immediate delivered in a single flip and (b) its sharded model used to simulate an underspecified, multi-turn interplay. Semantically, every model delivers the identical informational payload.

The primary shard all the time introduces the primary purpose of the duty, whereas the remainder present clarifying particulars. Collectively, they ship the identical content material as the unique immediate, however unfold out naturally over a number of turns within the dialog.

Every simulated dialog unfolds between three parts: the assistant, the mannequin underneath analysis; the consumer, a simulated agent with entry to the complete instruction in sharded kind; and the system, which invigilates and scores the alternate.

The dialog begins with the consumer revealing the primary shard and the assistant replying freely. The system then classifies that response into one in every of a number of classes, resembling a clarification request or a full reply try.

If the mannequin does try a solution, a separate element extracts simply the related span for analysis, ignoring any surrounding textual content. On every new flip, the consumer reveals one extra shard, prompting one other response. The alternate continues till both the mannequin will get the reply proper or there are not any shards left to disclose:

Diagram of a sharded dialog simulation, with the evaluated mannequin highlighted in purple.

Early assessments confirmed that fashions typically requested about info that hadn’t been shared but, so the authors dropped the thought of showing shards in a hard and fast order. As a substitute, a simulator was used to determine which shard to disclose subsequent, based mostly on how the dialog was going.

The consumer simulator, carried out utilizing GPT-4o-mini, was subsequently given full entry to each your entire instruction and the dialog historical past, tasked with deciding, at every flip, which shard to disclose subsequent, based mostly on how the alternate was unfolding.

The consumer simulator additionally rephrased every shard to take care of conversational movement, with out altering the which means. This allowed the simulation to mirror the ‘give-and-take’ of actual dialogue, whereas preserving management over the duty construction.

Earlier than the dialog begins, the assistant is given solely the essential info wanted to finish the duty, resembling a database schema or an API reference. It’s not advised that the directions can be damaged up, and it isn’t guided towards any particular method of dealing with the dialog. That is carried out on function: in real-world use, fashions are nearly by no means advised {that a} immediate can be incomplete or up to date over time, and leaving out this context helps the simulation mirror how the mannequin behaves in a extra reasonable context.

GPT-4o-mini was additionally used to determine how the mannequin’s replies must be categorised, and to tug out any last solutions from these replies. This helped the simulation keep versatile, however did introduce occasional errors: nevertheless, after checking a number of hundred conversations by hand, the authors discovered that fewer than 5 % had any issues, and fewer than two % confirmed a change in end result due to them, they usually thought-about this a low sufficient error price inside the parameters of the venture.

Simulation Situations

The authors used 5 forms of simulation to check mannequin habits underneath completely different situations, every a variation on how and when components of the instruction are revealed.

Within the Full setting, the mannequin receives your entire instruction in a single flip. This represents the usual benchmark format and serves because the efficiency baseline.

The Sharded setting breaks the instruction into a number of items and delivers them separately, simulating a extra reasonable, underspecified dialog. That is the primary setting used to check how nicely fashions deal with multi-turn enter.

Within the Concat setting, the shards are stitched again collectively as a single listing, preserving their wording however eradicating the turn-by-turn construction. This helps isolate the results of conversational fragmentation from rephrasing or content material loss.

The Recap setting runs like Sharded, however provides a last flip the place all earlier shards are restated earlier than the mannequin provides a last reply. This assessments whether or not a abstract immediate might help get better misplaced context.

Lastly, Snowball goes additional, by repeating all prior shards on each flip, preserving the complete instruction seen because the dialog unfolds – and providing a extra forgiving check of multi-turn means.

Simulation sorts based mostly on sharded directions. A completely-specified immediate is break up into smaller components, which may then be used to simulate both single-turn (Full, Concat) or multi-turn (Sharded, Recap, Snowball) conversations, relying on how rapidly the knowledge is revealed.

Duties and Metrics

Six technology duties had been chosen to cowl each programming and pure language domains: code technology prompts had been taken from HumanEval and LiveCodeBench; Textual content-to-SQL queries had been sourced from Spider; API calls had been constructed utilizing information from the Berkeley Operate Calling Leaderboard; elementary math issues had been offered by GSM8K; tabular captioning duties had been based mostly on ToTTo; and Multi-document summaries had been drawn from the Abstract of a Haystack dataset.

Mannequin efficiency was measured utilizing three core metrics: common efficiency, aptitude, and unreliability.

Common efficiency captured how nicely a mannequin did general throughout a number of makes an attempt; aptitude mirrored one of the best outcomes a mannequin may attain, based mostly on its top-scoring outputs; and unreliability measured how a lot these outcomes various, with bigger gaps between finest and worst outcomes indicating much less steady habits.

All scores had been positioned on a 0-100 scale to make sure consistency throughout duties, and metrics computed for every instruction – after which averaged to offer an general image of mannequin efficiency.

Six sharded duties used within the experiments, protecting each programming and pure language technology. Every activity is proven with a fully-specified instruction and its sharded model. Between 90 and 120 directions had been tailored from established benchmarks for every activity.

Contenders and Checks

Within the preliminary simulations (with an estimated price of $5000), 600 directions spanning six duties had been sharded and used to simulate three dialog sorts: full, concat, and sharded. For every mixture of mannequin, instruction, and simulation kind, ten conversations had been run, producing over 200,000 simulations in complete – a schema that made it attainable to seize each general efficiency and deeper measures of aptitude and reliability.

Fifteen fashions had been examined, spanning a variety of suppliers and architectures: the OpenAI fashions GPT-4o (model 2024-11-20), GPT-4o-mini (2024-07-18), GPT-4.1 (2025-04-14), and the pondering mannequin o3 (2025-04-16).

Anthropic fashions had been Claude 3 Haiku (2024-03-07) and Claude 3.7 Sonnet (2025-02-19), accessed through Amazon Bedrock.

Google contributed Gemini 2.5 Flash (preview-04-17) and Gemini 2.5 Professional (preview-03-25). Meta fashions had been Llama 3.1-8B-Instruct and Llama 3.3-70B-Instruct, in addition to Llama 4 Scout-17B-16E, through Collectively AI.

The opposite entries had been OLMo 2 13B, Phi-4, and Command-A, all accessed regionally through Ollama or Cohere API; and Deepseek-R1, accessed via Amazon Bedrock.

For the 2 ‘pondering’ fashions (o3 and R1), token limits had been raised to 10,000 to accommodate longer reasoning chains:

Common efficiency scores for every mannequin throughout six duties: code, database, actions, data-to-text, math, and abstract. Outcomes are proven for 3 simulation sorts: full, concat, and sharded. Fashions are ordered by their common full-setting rating. Shading displays the diploma of efficiency drop from the complete setting, with the ultimate two columns reporting common declines for concat and sharded relative to full.

Concerning these outcomes, the authors state†:

‘At a excessive stage, each mannequin sees its efficiency degrade on each activity when evaluating FULL and SHARDED efficiency, with a mean degradation of -39%. We title this phenomenon Misplaced in Dialog: fashions that obtain stellar (90%+) efficiency within the lab-like setting of fully-specified, single-turn dialog wrestle on the very same duties in a extra reasonable setting when the dialog is underspecified and multi-turn.’

Concat scores averaged 95 % of full, indicating that the efficiency drop within the sharded setting can’t be defined by info loss. Smaller fashions resembling Llama3.1-8B-Instruct, OLMo-2-13B, and Claude 3 Haiku confirmed extra pronounced degradation underneath concat, suggesting that smaller fashions are typically much less strong to rephrasing than bigger ones.

The authors observe†:

‘Surprisingly, extra performant fashions (Claude 3.7 Sonnet, Gemini 2.5, GPT-4.1) get equally misplaced in dialog in comparison with smaller fashions (Llama3.1-8B-Instruct, Phi-4), with common degradations of 30-40%. That is partially as a consequence of metric definitions. Since smaller fashions obtain decrease absolute scores in FULL, they’ve much less scope for degradation than the higher fashions.

‘Briefly, irrespective of how robust an LLM’s single-turn efficiency is, we observe giant efficiency degradations within the multi-turn setting.’

The preliminary check signifies that some fashions held up higher in particular duties: Command-A on Actions, Claude 3.7 Sonnet, and GPT-4.1 on code; and Gemini 2.5 Professional on Knowledge-to-Textual content, indicating that multi-turn means varies by area. Reasoning fashions resembling o3 and Deepseek-R1 fared no higher general, maybe as a result of their longer replies launched extra assumptions, which tended to confuse the dialog.

Reliability

The connection between aptitude and reliability, clear in single-turn simulations, appeared to crumble underneath multi-turn situations. Whereas aptitude declined solely modestly, unreliability doubled on common. Fashions that had been steady in full-format prompts, resembling GPT-4.1 and Gemini 2.5 Professional, turned simply as erratic as weaker fashions like Llama3.1-8B-Instruct or OLMo-2-13B as soon as the instruction was fragmented.

Overview of aptitude and unreliability as proven in a field plot (a), adopted by reliability outcomes from experiments with fifteen fashions (b), and outcomes from the gradual sharding check the place directions had been break up into one to eight shards (c).

Mannequin responses typically various by as a lot as 50 factors on the identical activity, even when nothing new was added, suggesting that the drop in efficiency was not as a consequence of a scarcity of ability, however to the mannequin turning into more and more unstable throughout turns.

The paper states†:

‘[Though] higher fashions are likely to have barely increased multi-turn aptitude, all fashions are likely to have related ranges of unreliability. In different phrases, in multi-turn, underspecified settings, all fashions we check exhibit very excessive unreliability, with efficiency degrading 50 % factors on common between one of the best and worst simulated run for a hard and fast instruction.’

To check whether or not efficiency degradation was tied to the variety of turns, the authors ran a gradual sharding experiment, splitting every instruction into one to eight shards (see right-most column in picture above).

Because the variety of shards elevated, unreliability rose steadily, confirming that even minor will increase in flip rely made fashions extra unstable. Aptitude remained principally unchanged, reinforcing that the difficulty lies in consistency, not functionality.

Temperature Management

A separate set of experiments examined whether or not unreliability was merely a byproduct of randomness. To do that, the authors various the temperature setting of each the assistant and the consumer simulator throughout three values: 1.0, 0.5, and 0.0.

In single-turn codecs like full and concat, decreasing the assistant’s temperature considerably improved reliability, slicing variation by as a lot as 80 %; however within the sharded setting, the identical intervention had little impact:

Unreliability scores for various combos of assistant and consumer temperature throughout full, concat, and sharded settings, with decrease values indicating higher response consistency.

Even when each the assistant and the consumer had been set to zero temperature, unreliability remained excessive, with GPT-4o exhibiting variation round 30 %, suggesting that the instability seen in multi-turn conversations isn’t just stochastic noise, however a structural weak spot in how fashions deal with fragmented enter.

Implications

The authors write of the implications of their findings at uncommon size on the paper’s conclusion, arguing that robust single-turn efficiency doesn’t assure multi-turn reliability, and cautioning towards over-relying on fully-specified benchmarks when evaluating real-world readiness (since such benchmarks masks instability in additional pure, fragmented interactions).

Additionally they recommend that unreliability isn’t just a sampling artifact, however a basic limitation in how present fashions course of evolving enter, they usually recommend that this raises issues for agent frameworks, which depend upon sustained reasoning throughout turns.

Lastly, they argue that multi-turn means must be handled as a core functionality of LLMs, not one thing offloaded to exterior methods.

The authors word that their outcomes doubtless underestimate the true scale of the issue, and draw consideration to the best situations of the check: the consumer simulator of their setup had full entry to the instruction and will reveal shards in an optimum order, which gave the assistant an unrealistically favorable context (in real-world use, customers typically provide fragmented or ambiguous prompts with out figuring out what the mannequin wants to listen to subsequent).

Moreover, the assistant was evaluated instantly after every flip, earlier than the complete dialog unfolded, stopping later confusion or self-contradiction from being penalized, which might in any other case worsen efficiency. These selections, whereas essential for experimental management, imply that the reliability gaps noticed in follow are more likely to be even higher than these reported.

They conclude:

‘[We] consider carried out simulations symbolize a benign testing floor for LLM multi-turn capabilities. Due to the overly simplified situations of simulation, we consider the degradation noticed in experiments is most probably an underestimate of LLM unreliability, and the way steadily LLMs get misplaced in dialog in real-world settings.‘

Conclusion

Anybody who has spent a big period of time with an LLM will doubtless acknowledge the problems formulated right here, from sensible expertise; and most of us, I think about, have intuitively deserted ‘misplaced’ LLM conversations for contemporary ones, within the hope that the LLM might ‘begin over’ and stop to obsess about materials that got here up in a protracted, winding and more and more infuriating alternate.

It is fascinating to notice that throwing extra context on the downside might not essentially resolve it; and certainly, to watch that the paper raises extra questions than it supplies solutions (besides when it comes to methods to skip round the issue).

* Confusingly, that is unrelated to the standard which means of ‘sharding’ in AI.

† Authors’ personal daring emphases.

First printed Monday, Might 12, 2025