As AI workloads splinter across the fragmented cloud-infrastructure landscape, enterprises are scrambling to keep performance, governance, and costs in check. Equinix reckons the answer is in neutral interconnection hubs that simplify last-mile access, orchestrate distributed infrastructure, and bring inference closer to users.

In sum – what to know:

Distributed AI – Training, inference, and agent workloads are forcing enterprises into complex multi-cloud setups and putting interconnect, backbone, and access networks under pressure.

Neutral fabric – Equinix proposes a last-mile fabric to connect branch offices to cloud hubs, and a hub framework to provide a neutral platform to manage AI across infrastructure vendors.

Edge inference – While training remains centralised, emerging agentic and inference workloads are moving into the cities, increasing the importance of interconnection and governance.

We wrote about this yesterday, briefly, at the top of the newsletter – about how AI is eating the data center, and how networks are on the hook. Central cloud infrastructure is teetering under the weight of spiralling workloads, and being distributed east-west into regional backwaters in search of power, and north-south into the metro centers and enterprise premises in an urgent quest to actually put AI to work. Enterprises are, suddenly, stitching together training in one cloud, inference in another, and agents at the edge – all without breaking performance budgets. Which is why networks matter more than ever. So here is the 12-inch remix, as told to RCR by US data centre provider Equinix.

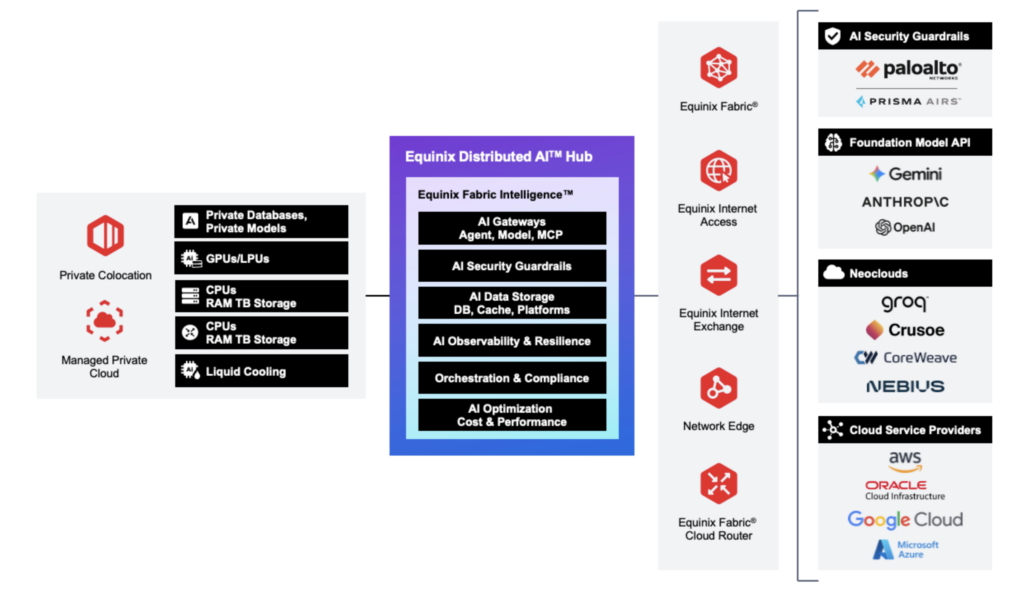

The conversation comes on the back of a couple of recent service announcements from the firm: a last-mile access service (Equinix Fabric), announced in January, and a related neutrality platform (Distributed AI Hub), announced yesterday (March 11). The point of both is to simplify the rapidly-fragmenting cloud/edge compute market as supply deals multiply and workloads cascade – whether to secure scarce-new GPU capacity for training or to serve tough-new requirements for inferencing. The last-mile solution is designed to solve coverage and contract issues with telecom providers, mostly at enterprise branch offices, by aggregating and automating local connectivity services.

The other offers a “unified framework” to navigate the “maze of silos” being erected by the broad AI ecosystem as its technology has morphed, its application has multiplied, and its infrastructure has exploded and scattered. “AI isn’t centralized – but the right infrastructure can make it run as seamlessly as if it were.” That is the line in its press release; the pitch from Equinix is that it has always been a neutral provider, hosting and shuffling enterprise data to and from big public hyperscale clouds, as required – and now also, for 12 months at least, to a host of frontier model developers and a swarm of specialist neo-clouds.

The ‘fabric’ proposition makes the last telco-mile work more like a regular hyperscale cloud service, quicker to find and easier to deploy; the hub framework does the same, effectively, with the gross unwieldiness of the growing AI ecosystem so its fragmenting infrastructure scales as one – and so Equinix can reinforce its neutrality position. Arun Dev, vice president of digital interconnection at Equinix, is on the end of the line, and explains both. The process for an enterprise to manually sort last-mile connectivity with a telco – to an office in Omaha, Nebraska, for example – “might take months”, he says. Equinix serves 77 metro centers, but not Omaha – directly, anyway.

Network aggregator

The enterprise needs a local fix to get access into the distributed compute grid – likely into a facility along ‘data center alley’ in Ashburn, Virginia. Dev says: “You can set up cloud connectivity in minutes with basic skills. That’s what enterprises are used to. And then they have to deal with a network provider, and the quoting, ordering, provisioning takes weeks or months.

“They’ve got their cloud set up, and they’re waiting on these extra steps. It’s a lot of friction – Omaha to Ashburn, to a workload on AWS.” As such, Equinix is working with an aggregator platform from Resolute CS to automate the last-mile telco flow – with the likes of AT&T, Lumen, T-Mobile, Verizon, Zayo in the US.

“All the major providers are on the platform. Put in an address in Edinburgh and it shows the local carriers, and automates quoting and ordering. So we can meet customers where they are, anywhere – and simplify that [last-mile] experience for them.” The system has processed a “large number of quotes” so far, since January; “most” in APAC and in Europe, he says.

Equinix is building tighter first-party integrations, as well, with carriers in its “major markets” – so an enterprise might pick BT in the UK, say, to “go office-to-cloud, end-to-end – without the aggregator”. But even to AWS in Ashburn, the proposition is to converge at a local Equinix on-ramp facility, as a neutral interconnection hub.

Which is the rationale behind its other announcement, yesterday. Same logic, wider scope – that enterprises are spreading workloads across fragmented infrastructure: public and private, open and closed, international and national, central and local. Outside of the parochial access network, the challenge is about visibility and control across the whole piece – around the flow of data, volume of leakage, burden of compliance, application of guardrails. In the end, the everyway-impact on cost in such a fluid data architecture is hard to manage. “You know about shadow IT,” he says. “Well, shadow AI is real.” It is still about networks; just more about the orchestration between them.

Equinix calls its hub proposition a “neutral location that enables enterprises to discover, connect to, and consume AI infrastructure – including [from] model companies, GPU clouds, data platforms, network and security services”. These are delivered “all through private, low-latency connectivity” at its (280) data centers, it says. Dev doesn’t say much about this “low-latency (inter)connectivity” – of the kind offered in programmable fiber backbone systems, as discussed in these pages recently in coverage of Verizon, Cisco, Nokia, Lumen, Ciena, plus all the stuff out of PTC – except to say the same providers on its aggregator platform are “in our facilities”.

Neutrality concept

Except also, that the network is more critical than ever. “That’s where our deep partnerships with service providers come in,” he says. But the hub framework is more broadly about how infrastructure is governed and orchestrated – using its data centers and network fabric as a control point for distributed AI workloads. Its first integration is with Palo Alto Networks (Prisma AI) around governance and security across distributed systems; more will follow through the year. Hyperscaler marketplaces direct enterprises to hyperscale tools; the Equinix framework is vendor-neutral, giving customers freedom to compose their AI stack – which they are being forced to do anyway, argues Dev.

“The GPU shortage means they follow the supply, via a neo-cloud or another hyperscaler. So their workload is on cloud A and their GPU capabilities are in cloud B,” he says. As a point of note, the neutrality concept is based on the idea that enterprises meet the hyperscalers in shared facilities. Amazon Web Services (AWS), Microsoft Azure, Oracle Cloud Infrastructure (OCI), and Google Cloud (GCP) keep infrastructure in Equinix data centres in all its 77 ‘on-ramp’ metro stops – so a particular payload across a last-mile link in Omaha is properly directed in Ashburn, according to the job detail. More than 40 percent of global cloud on-ramps are in Equinix facilities, says Dev.

He goes on: “It drives this requirement to make distributed infrastructure work. Because your workload is split across locations. And if all your data is in cloud A and all your compute is in cloud B, then you are moving data back and forth, and you get hit with egress fees on both sides. So enterprises have decided the best thing is to have their data in a neutral location, and use the compute in A and the workflow in B via the interconnection piece. I mean, that’s the whole evolution over the last 10 years: going from where everything moved to the public cloud, to putting the right workload in the right place, exacerbated by a shortage of GPUs – and, now, figuring out how to make that work.”
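The “both sides” point in Dev’s quote can be put as a back-of-the-envelope sum. What follows is only a rough sketch in Python: the function names are hypothetical, the per-gigabyte rates and volumes in the usage note are made-up placeholders rather than figures from Equinix or any cloud provider, and it assumes, for illustration, that moving data into a cloud is not charged.

```python
def egress_cost(gb_moved: float, rate_per_gb: float) -> float:
    """Charge for moving `gb_moved` gigabytes out of a cloud at a flat per-GB rate."""
    return gb_moved * rate_per_gb


def back_and_forth_cost(dataset_gb: float, results_gb: float,
                        rate_a: float, rate_b: float) -> float:
    """Data lives in cloud A, compute in cloud B.

    The dataset pays A's egress on the way out, and the results pay
    B's egress on the way back: a charge on both sides.
    """
    return egress_cost(dataset_gb, rate_a) + egress_cost(results_gb, rate_b)


def neutral_location_cost(results_gb: float, rate_b: float) -> float:
    """Data lives in a neutral location outside either cloud.

    ASSUMPTION (illustrative only): sending data into cloud B is free,
    so only the results leaving B are charged.
    """
    return egress_cost(results_gb, rate_b)
```

With placeholder inputs of a 10,000 GB dataset, 500 GB of results, and rates of 0.09 and 0.08 per GB, `back_and_forth_cost(10_000, 500, 0.09, 0.08)` is dominated by shipping the dataset out of cloud A, a term that drops out of `neutral_location_cost(500, 0.08)` altogether. Real pricing is tiered and varies by provider and by connection type, so the shape of the comparison matters more than the numbers.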

But it is not just about GPU shortages in east-west interconnect systems for training workloads; AI agents are migrating north-south to the edge for inference tasks, also forcing enterprises to look for neutral-host metro sites. Analyst firm IDC reckons 80 percent of enterprises will deploy distributed edge infrastructure to improve the latency and responsiveness of AI applications as soon as 2027; Omdia says agentic AI projects will see 175 percent annual growth (CAGR) by 2029. “Customers say, ‘Hey, great, I’ve done my training, but my inference is at the metro edge’,” says Dev.

Metro edge

Which is where Equinix is, we know – in 77 “major metro markets,” says Dev. He goes on: “We have a standardized footprint across the world. Our co-location footprint in Singapore looks the same in Ashburn, Frankfurt, everywhere.” As well, enterprises are not required to install physical infrastructure to expand to new regions. “We have a virtualized stack. So you don’t always have to deploy physical gear,” he says. “If you want to expand into India, say, you can deploy virtual functions from us in Mumbai. You’ll get the same experience you get in Ashburn and London.” For inference workloads in particular, enterprises are increasingly deploying virtual infrastructure across metro markets rather than shipping equipment into every location.

Data sovereignty, amped-up by dicey geopolitics, feeds into this helter-skelter AI management, as well. Equinix offers “jurisdiction-aware” steering so traffic on virtual services and colocation routes stays within borders. “We can keep all traffic in Canada, say, so nothing goes to the US.” As a rule, enterprises keep their largest physical deployments in hubs such as Ashburn or London, while using virtual network functions – firewalls, routers, cloud connectivity from the likes of Palo Alto Networks, Cisco, Juniper Networks, Zscaler, variously – to extend into harder-to-reach markets. “A large US bank was up and running with a virtual setup in Mumbai in under a week,” he says.
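As a rough illustration of what “jurisdiction-aware” steering means in principle, the Python sketch below runs a breadth-first search over a toy network and refuses to pass through any site outside an allowed set of countries. It is not Equinix’s implementation, which the article does not describe; the site names, links, and the `find_path` function are all hypothetical.

```python
from collections import deque

# Hypothetical sites and the country each one sits in (illustrative only).
SITE_COUNTRY = {
    "toronto": "CA", "winnipeg": "CA", "calgary": "CA", "vancouver": "CA",
    "chicago": "US",
}

# Hypothetical two-way links between sites.
LINKS = {
    "toronto": ["chicago", "winnipeg"],
    "chicago": ["toronto", "vancouver"],
    "winnipeg": ["toronto", "calgary"],
    "calgary": ["winnipeg", "vancouver"],
    "vancouver": ["chicago", "calgary"],
}


def find_path(src, dst, allowed_countries=None):
    """Return a fewest-hops path from src to dst, or None if there is none.

    If allowed_countries is given, every site on the path (including the
    endpoints) must sit in one of those countries.
    """
    def permitted(site):
        return allowed_countries is None or SITE_COUNTRY[site] in allowed_countries

    if not (permitted(src) and permitted(dst)):
        return None

    queue = deque([[src]])
    seen = {src}
    while queue:
        path = queue.popleft()
        if path[-1] == dst:
            return path
        for nxt in LINKS[path[-1]]:
            if nxt not in seen and permitted(nxt):
                seen.add(nxt)
                queue.append(path + [nxt])
    return None
```

In this toy topology, the unconstrained call `find_path("toronto", "vancouver")` takes the shorter hop through Chicago, while `find_path("toronto", "vancouver", {"CA"})` takes the longer all-Canadian route through Winnipeg and Calgary. The trade-off is the one Dev describes: traffic stays in-country, at the cost of a less direct path.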

The question of where AI agents will take up residence is unsettled. “All of the above,” says Dev, when presented with all the stops on the cloud-edge continuum. For now, it depends on the service provider, and where their resources are distributed. “Anthropic runs where Anthropic runs; an open LLaMA model can be deployed on-prem; Mistral AI will route traffic through its locations in France. Which is why the complexity of the traffic flow has exploded – versus the situation 10 years ago.” But AI service providers will also follow the science, ultimately, governing the three-way equilibrium between application requirements, technological innovation, and infrastructure viability.

But the logic is clear: large-scale model training might remain highly centralized (“you can do all your training in West Texas, where there are farms and nothing else”), but inference workloads will move closer to the action – in factory systems, retail applications, financial services with stricter response times. Equinix’s metro footprint – including into new countries like Thailand, Malaysia, Indonesia – supports the shift, says Dev.

He rounds off: “It used to be simple: get to your Equinix co-location, get to AWS, get to Azure. That was it. Now I’ve got a LLaMA instance, Anthropic over there, my own model here, a factory somewhere else. How do you have visibility? How do you build connectivity? That agentic traffic is increasing significantly – north-south, some east-west. These are smaller requests, but there are lots of them. The complexity has multiplied significantly.”