Common streaming data enrichment patterns in Amazon Managed Service for Apache Flink

Stream data processing allows you to act on data in real time. Real-time data analytics can help you deliver timely, optimized responses while improving the overall customer experience.

Apache Flink is a distributed computation framework that allows for stateful real-time data processing. It provides a single set of APIs for building batch and streaming jobs, making it easy for developers to work with bounded and unbounded data. Apache Flink provides different levels of abstraction to cover a variety of event processing use cases.

Amazon Managed Service for Apache Flink (Amazon MSF) is an AWS service that provides a serverless infrastructure for running Apache Flink applications. This makes it easy for developers to build highly available, fault tolerant, and scalable Apache Flink applications without needing to become an expert in building, configuring, and maintaining Apache Flink clusters on AWS.

Data streaming workloads often require data in the stream to be enriched via external sources (such as databases or other data streams). For example, assume you are receiving coordinates data from a GPS device and need to understand how these coordinates map to physical geographic locations; you need to enrich it with geolocation data. You can use several approaches to enrich your real-time data in Amazon MSF, depending on your use case and Apache Flink abstraction level. Each method has different effects on throughput, network traffic, and CPU (or memory) utilization. In this post, we cover these approaches and discuss their benefits and drawbacks.

Data enrichment patterns

Data enrichment is a process that appends additional context to the collected data and enhances it. The additional data is often collected from a variety of sources. The format and the frequency of the data updates can range from once a month to many times a second. The following table shows a few examples of different sources, formats, and update frequencies.

| Data | Format | Update Frequency |
| --- | --- | --- |
| IP address ranges by country | CSV | Once a month |
| Company organization chart | JSON | Twice a year |
| Machine names by ID | CSV | Once a day |
| Employee information | Table (Relational database) | Several times a day |
| Customer information | Table (Non-relational database) | Several times an hour |
| Customer orders | Table (Relational database) | Many times a second |

Based on the use case, your data enrichment application may have different requirements in terms of latency, throughput, or other factors. The remainder of the post dives deeper into different patterns of data enrichment in Amazon MSF, which are listed in the following table with their key characteristics. You can choose the best pattern based on the trade-off of these characteristics.

| Enrichment Pattern | Latency | Throughput | Accuracy if Reference Data Changes | Memory Utilization | Complexity |
| --- | --- | --- | --- | --- | --- |
| Pre-load reference data in Apache Flink Task Manager memory | Low | High | Low | High | Low |
| Partitioned pre-loading of reference data in Apache Flink state | Low | High | Low | Low | Low |
| Periodic partitioned pre-loading of reference data in Apache Flink state | Low | High | Medium | Low | Medium |
| Per-record asynchronous lookup with unordered map | Medium | Medium | High | Low | Low |
| Per-record asynchronous lookup from an external cache system | Low or Medium (depending on cache storage and implementation) | Medium | High | Low | Medium |
| Enriching streams using the Table API | Low | High | High | Low to Medium (depending on the selected join operator) | Low |

Enrich streaming data by pre-loading the reference data

When the reference data is small in size and static in nature (for example, country data including country code and country name), we recommend enriching your streaming data by pre-loading the reference data, which you can do in several ways.

To see the code implementation for pre-loading reference data in various ways, refer to the GitHub repo. Follow the instructions in the GitHub repository to run the code and understand the data model.

Pre-loading of reference data in Apache Flink Task Manager memory

The simplest and also fastest enrichment method is to load the enrichment data into the on-heap memory of each Apache Flink task manager. To implement this method, you create a new class by extending the RichFlatMapFunction abstract class. You define a global static variable in your class definition. The variable can be of any type; the only limitation is that it should implement java.io.Serializable (for example, java.util.HashMap). Within the open() method, you define the logic that loads the static data into your defined variable. The open() method is always called first, during the initialization of each task in Apache Flink's task managers, which makes sure the whole reference data is loaded before the processing begins. You implement your processing logic by overriding the flatMap() method, where you access the reference data by its key from the defined global variable.

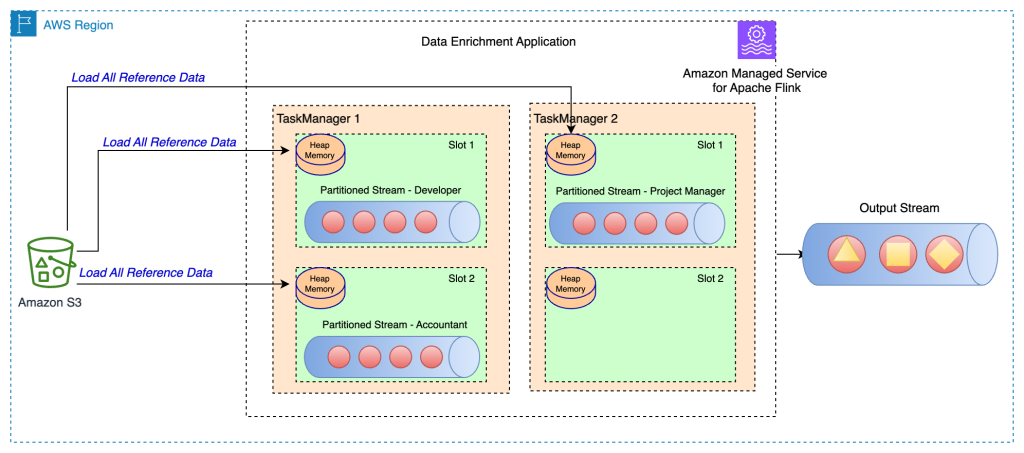

The next structure diagram reveals the complete reference information load in every job slot of the duty supervisor:

This method has the following benefits:

- Easy to implement

- Low latency

- Can support high throughput

However, it has the following disadvantages:

- If the reference data is large in size, the Apache Flink task manager may run out of memory.

- Reference data can become stale over a period of time.

- Multiple copies of the same reference data are loaded in each task slot of the task manager.

- Reference data should be small enough to fit in the memory allocated to a single task slot. In Amazon MSF, each Kinesis Processing Unit (KPU) has 4 GB of memory, out of which 3 GB can be used for heap memory. If ParallelismPerKPU in Amazon MSF is set to 1, one task slot runs in each task manager, and the task slot can use the whole 3 GB of heap memory. If ParallelismPerKPU is set to a value greater than 1, the 3 GB of heap memory is distributed across multiple task slots in the task manager. If you're deploying Apache Flink in Amazon EMR or in a self-managed mode, you can tune taskmanager.memory.task.heap.size to increase the heap memory of a task manager.

Partitioned pre-loading of reference data in Apache Flink State

In this approach, the reference data is loaded and kept in the Apache Flink state store at the start of the Apache Flink application. To optimize memory utilization, the main data stream is first divided by a specified field via the keyBy() operator across all task slots. Furthermore, only the portion of the reference data that corresponds to each task slot is loaded in the state store.

This is achieved in Apache Flink by creating the class PartitionPreLoadEnrichmentData, extending the RichFlatMapFunction abstract class. Within the open method, you define a ValueStateDescriptor to create a state handle. In the referenced example, the descriptor is named locationRefData, the state key type is String, and the value type is Location. In this code, we use ValueState rather than MapState because we only hold the location reference data for a particular key. For example, when we query Amazon S3 to get the location reference data, we query for the specific role and get a particular location as a value.

In Apache Flink, ValueState is used to hold a specific value for a key, whereas MapState is used to hold a combination of key-value pairs. This technique is useful when you have a large static dataset that is difficult to fit in memory as a whole for each partition.

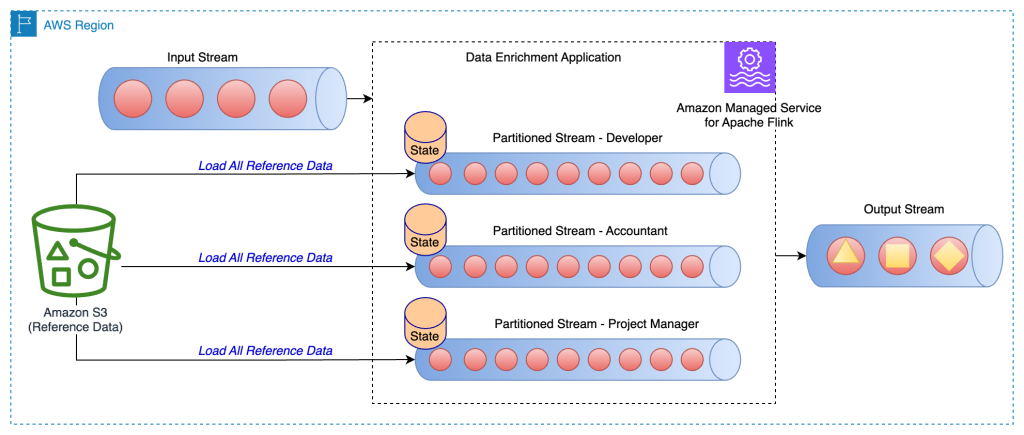

The next structure diagram reveals the load of reference information for the particular key for every partition of the stream.

For instance, our reference information within the pattern GitHub code has roles that are mapped to every constructing. As a result of the stream is partitioned by roles, solely the particular constructing data per function is required to be loaded for every partition because the reference information.This technique has the next advantages:

- Low latency.

- Can support high throughput.

- Reference data for a specific partition is loaded in the keyed state.

- In Amazon MSF, the default state store configured is RocksDB. RocksDB can utilize a significant portion of the 1 GB of managed memory and 50 GB of disk space provided by each KPU. This provides enough room for the reference data to grow.

However, it has the following disadvantages:

- Reference data can become stale over a period of time.

Periodic partitioned pre-loading of reference data in Apache Flink State

This approach is a refinement of the previous technique, where each partition's reference data is reloaded on a periodic basis to refresh it. This is useful if your reference data changes occasionally.

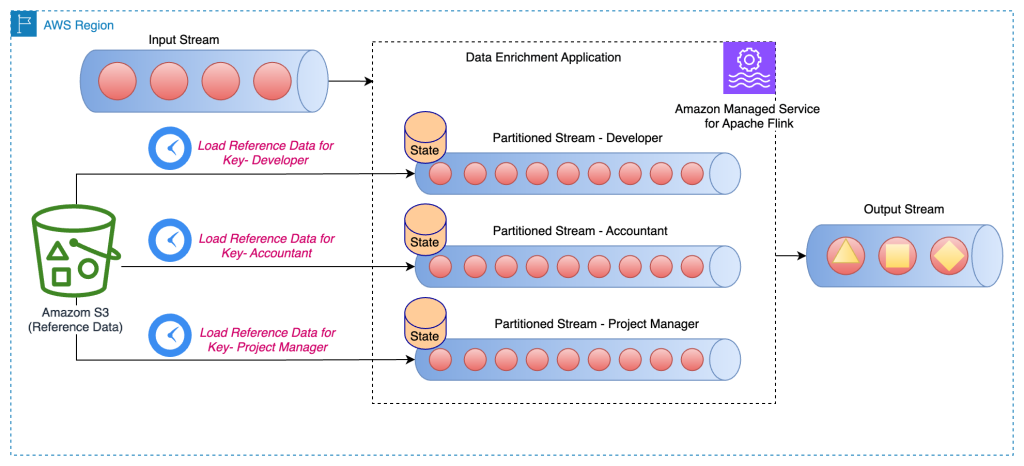

The next structure diagram reveals the periodic load of reference information for the particular key for every partition of the stream:

On this strategy, the category PeriodicPerPartitionLoadEnrichmentData is created, extending the KeyedProcessFunction class. Just like the earlier sample, within the context of the GitHub instance, ValueState is beneficial right here as a result of every partition solely masses a single worth for the important thing. In the identical means as talked about earlier, within the open technique, you outline the ValueStateDescriptor to deal with the worth state and outline a runtime context to entry the state.

Throughout the processElement technique, load the worth state and connect the reference information (within the referenced GitHub instance, we hooked up buildingNo to the client information). Additionally register a timer service to be invoked when the processing time passes the given time. Within the pattern code, the timer service is scheduled to be invoked periodically (for instance, each 60 seconds). Within the onTimer technique, replace the state by making a name to reload the reference information for the particular function.

This method has the following benefits:

- Low latency.

- Can support high throughput.

- Reference data for specific partitions is loaded in the keyed state.

- Reference data is refreshed periodically.

- In Amazon MSF, the default state store configured is RocksDB. Each KPU also provides 50 GB of disk space, which gives the reference data enough room to grow.

However, it has the following disadvantages:

- If the reference data changes frequently, the application can still read stale data, depending on how frequently the state is reloaded.

- The application can face load spikes during the reload of reference data.

Enrich streaming data using per-record lookup

Although pre-loading of reference data provides low latency and high throughput, it may not be suitable for certain types of workloads, such as the following:

- Reference data updates with high frequency

- Apache Flink needs to make an external call to compute the business logic

- Accuracy of the output is important and the application shouldn't use stale data

Normally, for these types of use cases, developers trade off high throughput and low latency for data accuracy. In this section, you learn about a few common implementations for per-record data enrichment and their benefits and disadvantages.

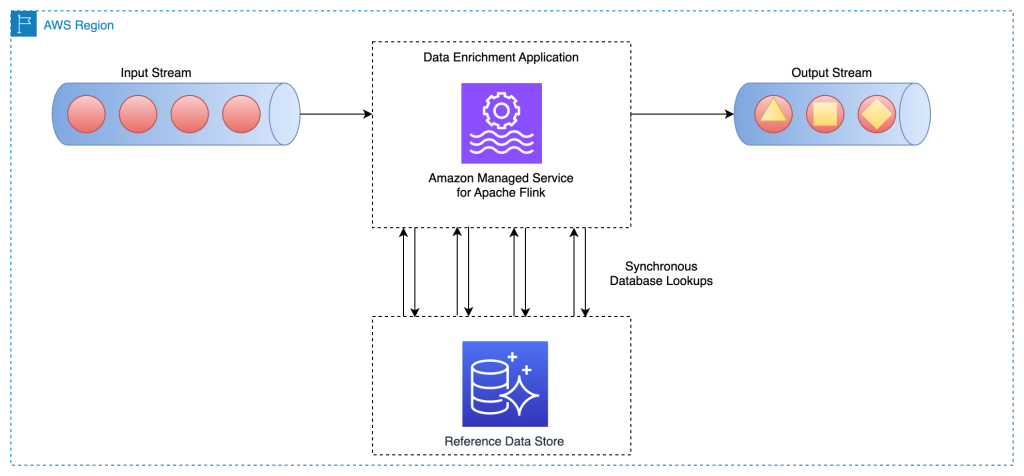

Per-record asynchronous lookup with unordered map

In a synchronous per-record lookup implementation, the Apache Flink utility has to attend till it receives the response after sending each request. This causes the processor to remain idle for a big interval of processing time. As a substitute, the applying can ship a request for different parts within the stream whereas it waits for the response for the primary factor. This manner, the wait time is amortized throughout a number of requests and due to this fact it will increase the method throughput. Apache Flink offers asynchronous I/O for exterior information entry. Whereas utilizing this sample, it’s important to determine between unorderedWait (the place it emits the outcome to the subsequent operator as quickly because the response is acquired, disregarding the order of the factor on the stream) and orderedWait (the place it waits till all inflight I/O operations full, then sends the outcomes to the subsequent operator in the identical order as authentic parts had been positioned on the stream). Often, when downstream shoppers disregard the order of the weather within the stream, unorderedWait offers higher throughput and fewer idle time. Go to Enrich your information stream asynchronously utilizing Managed Service for Apache Flink to be taught extra about this sample.

The next structure diagram reveals how an Apache Flink utility on Amazon MSF does asynchronous calls to an exterior database engine (for instance Amazon DynamoDB) for each occasion in the principle stream:

This method has the following benefits:

- Still reasonably simple and easy to implement

- Reads the most up-to-date reference data

However, it has the following disadvantages:

- It generates a heavy read load for the external system (for example, a database engine or an external API) that hosts the reference data

- Overall, it might not be suitable for systems that require high throughput with low latency

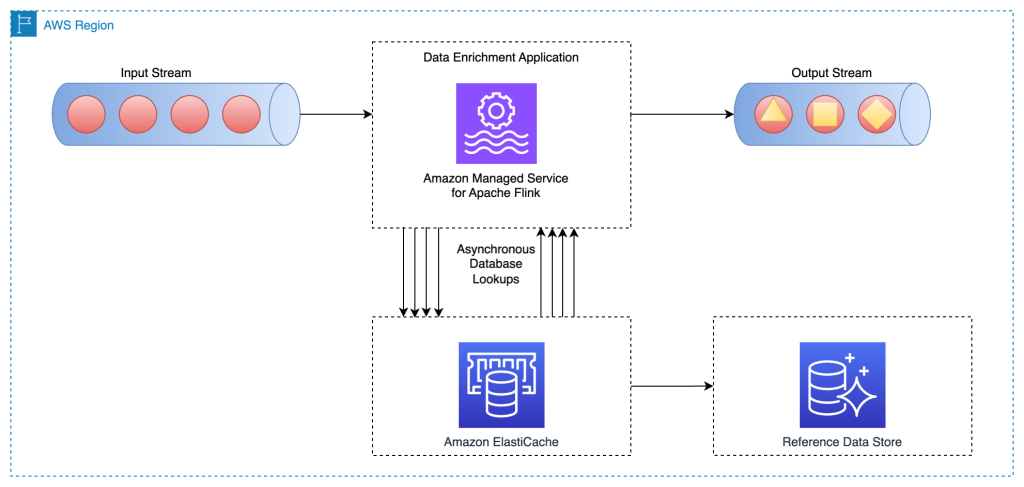

Per-record asynchronous lookup from an exterior cache system

A technique to improve the earlier sample is to make use of a cache system to reinforce the learn time for each lookup I/O name. You should utilize Amazon ElastiCache for caching, which accelerates utility and database efficiency, or as a main information retailer to be used circumstances that don’t require sturdiness like session shops, gaming leaderboards, streaming, and analytics. ElastiCache is suitable with Redis and Memcached.

For this sample to work, you could implement a caching sample for populating information within the cache storage. You may select between a proactive or reactive strategy relying your utility aims and latency necessities. For extra data, discuss with Caching patterns.

The next structure diagram reveals how an Apache Flink utility calls to learn the reference information from an exterior cache storage (for instance, Amazon ElastiCache for Redis). Information modifications should be replicated from the principle database (for instance, Amazon Aurora) to the cache storage by implementing one of many caching patterns.

Implementation for this information enrichment sample is just like the per-record asynchronous lookup sample; the one distinction is that the Apache Flink utility makes a connection to the cache storage, as a substitute of connecting to the first database.

This method has the following benefits:

- Better throughput because caching can accelerate application and database performance

- Protects the primary data source from the read traffic created by the stream processing application

- Can provide lower read latency for every lookup call

However, it has the following disadvantages:

- Additional complexity of implementing a cache pattern for populating and syncing the data between the primary database and the cache storage

- There is a chance for the Apache Flink stream processing application to read stale reference data, depending on which caching pattern is implemented

- Depending on the chosen cache pattern (proactive or reactive), the response time for each enrichment I/O may differ, so the overall processing time of the stream can be unpredictable

- Overall, it might not be suitable for medium to high throughput systems that want to improve data freshness

Alternatively, you can avoid these complexities by using the Apache Flink JDBC connector for Flink SQL APIs. We discuss enriching stream data via Flink SQL APIs in more detail later in this post.
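As a rough sketch of what the JDBC connector approach can look like in Flink SQL, the following defines a JDBC-backed reference table and enriches a stream with a lookup join. The table names (`orders`, `customers`), the columns, and the connection URL are hypothetical placeholders, and the `orders` table is assumed to already exist with a processing-time attribute named `proc_time` (declared as `proc_time AS PROCTIME()`). The cache options shown are the JDBC connector's legacy lookup cache settings; newer connector versions expose equivalent `lookup.partial-cache.*` options, so check the version you deploy.

```sql
-- Hypothetical reference table backed by a relational database.
CREATE TABLE customers (
    customer_id STRING,
    customer_name STRING,
    country STRING,
    PRIMARY KEY (customer_id) NOT ENFORCED
) WITH (
    'connector' = 'jdbc',
    'url' = 'jdbc:mysql://example-host:3306/refdb',  -- placeholder endpoint
    'table-name' = 'customers',
    -- Credentials omitted; supply 'username' and 'password' as needed.
    'lookup.cache.max-rows' = '10000',  -- keep up to 10,000 looked-up rows in the connector cache
    'lookup.cache.ttl' = '10 min'       -- cached rows expire after 10 minutes
);

-- Lookup join: each order is enriched with the customer row fetched
-- (or served from the connector cache) at the order's processing time.
SELECT o.order_id, o.amount, c.customer_name, c.country
FROM orders AS o
JOIN customers FOR SYSTEM_TIME AS OF o.proc_time AS c
    ON o.customer_id = c.customer_id;
```

With this design, the connector's built-in cache takes the place of a separately managed cache tier, at the cost of reference data being up to one TTL interval stale.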

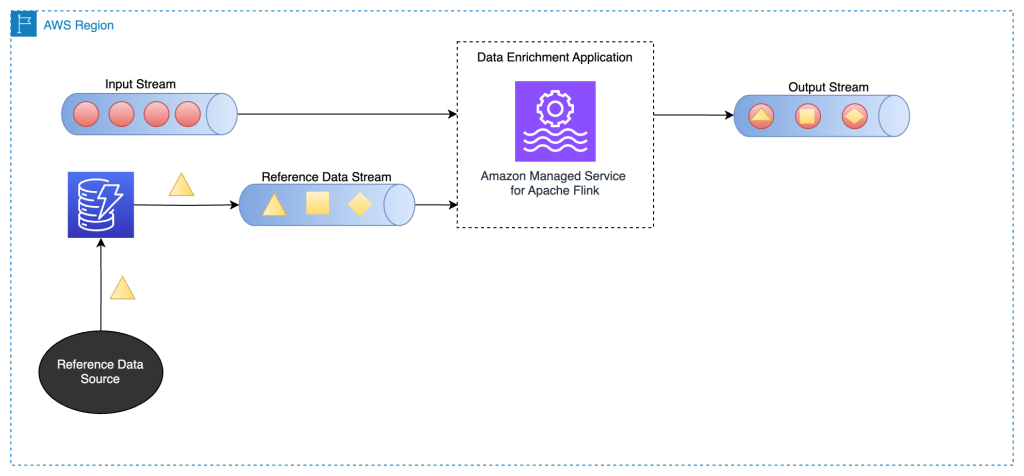

Enrich stream information by way of one other stream

On this sample, the info in the principle stream is enriched with the reference information in one other information stream. This sample is nice to be used circumstances through which the reference information is up to date continuously and it’s attainable to carry out change information seize (CDC) and publish the occasions to an information streaming service reminiscent of Apache Kafka or Amazon Kinesis Information Streams. This sample is beneficial within the following use circumstances, for instance:

- Buyer buy orders are printed to a Kinesis information stream, after which be part of with buyer billing data in a DynamoDB stream

- Information occasions captured from IoT units ought to enrich with reference information in a desk in Amazon Relational Database Service (Amazon RDS)

- Community log occasions ought to enrich with the machine title on the supply (and the vacation spot) IP addresses

The next structure diagram reveals how an Apache Flink utility on Amazon MSF joins information in the principle stream with the CDC information in a DynamoDB stream.

To complement streaming information from one other stream, we use a standard stream to stream be part of patterns, which we clarify within the following sections.

Enrich streams using the Table API

Apache Flink Table APIs provide higher abstraction for working with data events. With Table APIs, you can define your data stream as a table and attach the data schema to it.

In this pattern, you define tables for each data stream and then join those tables to achieve the data enrichment goals. Apache Flink Table APIs support different types of join conditions, like inner join and outer join. However, you want to avoid those if you're dealing with unbounded streams because they are resource intensive. To limit the resource utilization and run joins effectively, you should use either interval or temporal joins. An interval join requires at least one equi-join predicate and a join condition that bounds the time on both sides. To better understand how to implement an interval join, refer to Get started with Amazon Managed Service for Apache Flink (Table API).
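To make the shape of an interval join concrete, here is a minimal sketch in Flink SQL. The `orders` and `shipments` tables and their columns are hypothetical; both tables are assumed to declare event-time attributes (`order_time` and `ship_time`) with watermarks.

```sql
-- Interval join: the equi-join predicate matches rows by key, and the
-- BETWEEN clause bounds the time on both sides, so Flink can drop state
-- for rows that can no longer find a match.
SELECT o.order_id, o.order_time, s.ship_time
FROM orders AS o
JOIN shipments AS s
    ON o.order_id = s.order_id
    AND s.ship_time BETWEEN o.order_time AND o.order_time + INTERVAL '4' HOUR;
```

The four-hour bound is only an illustration; a tighter bound keeps less state, while a looser one tolerates later-arriving matches.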

Compared to interval joins, temporal table joins don't work with a time period within which different versions of a record are kept. Records from the main stream are always joined with the corresponding version of the reference data at the time specified by the watermark. Therefore, fewer versions of the reference data remain in the state. Note that the reference data may or may not have a time element associated with it. If it doesn't, you may need to add a processing time element for the join with the time-based stream.

In the following example code snippet, the update_time column is added to the currency_rates reference table from the change data capture metadata, such as that provided by Debezium. Furthermore, it's used to define a watermark strategy for the table.

    CREATE TABLE currency_rates (
        currency STRING,
        conversion_rate DECIMAL(32, 2),
        update_time TIMESTAMP(3) METADATA FROM `values.source.timestamp` VIRTUAL,
        WATERMARK FOR update_time AS update_time,
        PRIMARY KEY(currency) NOT ENFORCED
    ) WITH (
        'connector' = 'kafka',
        'value.format' = 'debezium-json',
        /* ... */
    );
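A temporal join against this reference table might then look like the following sketch. The `orders` table and its columns are hypothetical; it is assumed to carry a `currency` column and an event-time attribute `order_time` with a watermark.

```sql
-- Event-time temporal join: each order is matched with the version of
-- the currency rate that was valid at the order's event time.
SELECT
    o.order_id,
    o.price,
    o.currency,
    r.conversion_rate,
    o.order_time
FROM orders AS o
LEFT JOIN currency_rates FOR SYSTEM_TIME AS OF o.order_time AS r
    ON o.currency = r.currency;
```

The join key must cover the primary key of the versioned table, which is why the reference table declares `PRIMARY KEY ... NOT ENFORCED`.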

This method has the following benefits:

- Easy to implement

- Low latency

- Can support high throughput when the reference data is a data stream

SQL APIs provide higher abstractions over how the data is processed. We recommend that you always start with SQL APIs, and move to DataStream APIs only if you really need more complex logic around how the join operator should process records.

Conclusion

In this post, we demonstrated different data enrichment patterns in Amazon MSF. You can use these patterns to find the one that addresses your needs and quickly develop a stream processing application.

For further reading on Amazon MSF, visit the official product page.
